AIhub.org

AlphaFold advances protein folding research

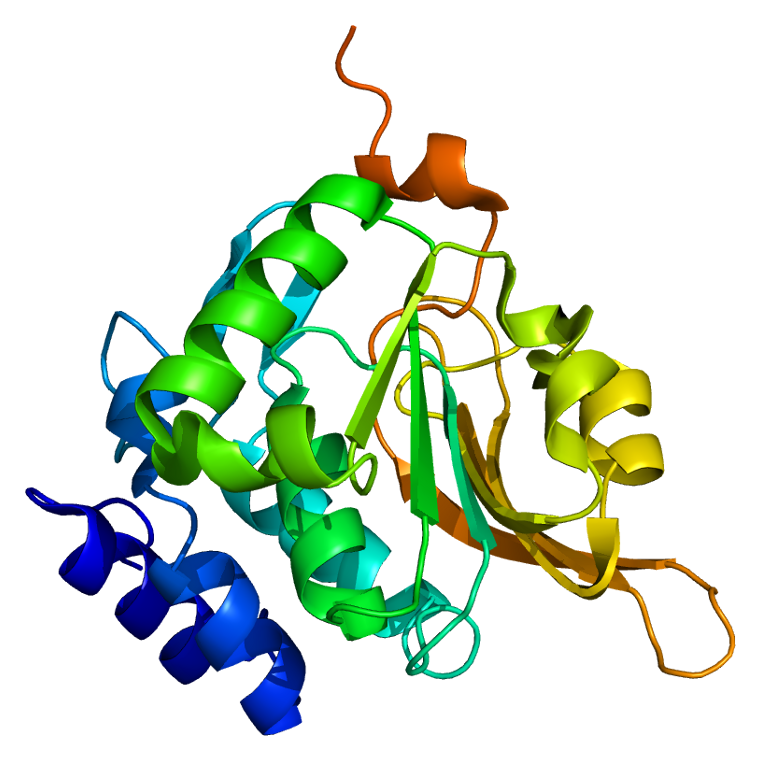

The grand challenge of protein folding hit the news this week when it was announced that the latest version of DeepMind’s AlphaFold system had predicted protein structures with very high accuracy in CASP’s 2020 experiment.

Proteins are large, complex molecules, and the shape of a particular protein is closely linked to the function it performs. The ability to accurately predict protein structures would give scientists a better understanding of how proteins work and what they do.

Protein folding is explained in this video from DeepMind:

How AlphaFold works

This new version of AlphaFold builds on the initial system, which you can read about in this paper. The associated code is available here. In this first version, the team trained a neural network to predict the distribution of distances between pairs of amino acid residues (the beads in the protein chain), which conveys information about the protein's structure. From these predictions, they constructed a potential of mean force that accurately describes the shape of a protein. The resulting potential can be optimized with a simple gradient descent algorithm.
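To make the "potential plus gradient descent" idea concrete, here is a minimal toy sketch, not AlphaFold's code. It assumes a harmonic stand-in potential, V(x) = sum over residue pairs of (|x_i - x_j| - d_ij)^2, where d_ij plays the role of a predicted distance; the published method used the negative log-likelihood of predicted distance distributions and parameterised the chain differently, whereas this sketch moves 3D coordinates directly. The function names `potential`, `gradient` and `fold`, the step size and the toy chain are all hypothetical.

```python
import math
import random

# Hypothetical toy potential: squared error between current and "predicted"
# pairwise distances. A simplification of a learned potential of mean force.


def potential(coords, target):
    """Sum over residue pairs of (current distance - target distance)^2."""
    n = len(coords)
    return sum(
        (math.dist(coords[i], coords[j]) - target[i][j]) ** 2
        for i in range(n)
        for j in range(i + 1, n)
    )


def gradient(coords, target):
    """Analytic gradient of `potential` with respect to every coordinate."""
    n = len(coords)
    grad = [[0.0, 0.0, 0.0] for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            diff = [a - b for a, b in zip(coords[i], coords[j])]
            d = math.dist(coords[i], coords[j])
            if d == 0.0:
                continue  # direction undefined for coincident residues; skip pair
            # d/dx_i (d - t)^2 = 2 (d - t) (x_i - x_j) / d; opposite sign for x_j
            scale = 2.0 * (d - target[i][j]) / d
            for k in range(3):
                grad[i][k] += scale * diff[k]
                grad[j][k] -= scale * diff[k]
    return grad


def fold(target, steps=2000, lr=0.01, seed=0):
    """Plain gradient descent from a random start.

    Returns (final coords, starting potential, final potential).
    """
    rng = random.Random(seed)
    n = len(target)
    coords = [[rng.uniform(-5.0, 5.0) for _ in range(3)] for _ in range(n)]
    start = potential(coords, target)
    for _ in range(steps):
        g = gradient(coords, target)
        for i in range(n):
            for k in range(3):
                coords[i][k] -= lr * g[i][k]
    return coords, start, potential(coords, target)


# Usage: try to recover a toy 6-residue chain from its own pairwise distances.
true_coords = [(3.8 * i, 2.0 * math.sin(i), 2.0 * math.cos(i)) for i in range(6)]
target = [[math.dist(a, b) for b in true_coords] for a in true_coords]
coords, before, after = fold(target)
```

With a small enough step size each update lowers the potential, so `after` ends below `before`; reaching exactly zero is not guaranteed, because a distance-based potential can have local minima.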

In version two, the team implemented new deep learning architectures. A folded protein can be thought of as a spatial graph, in which residues are the nodes and edges connect residues that are close together. The team created an attention-based neural network system, trained end-to-end, that attempts to interpret the structure of this graph while reasoning over the implicit graph that it is building. To refine this graph, the system uses evolutionarily related sequences, in the form of a multiple sequence alignment (MSA), together with a representation of amino acid residue pairs.
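The "spatial graph" view can be illustrated with a short sketch that turns residue coordinates into an adjacency list. This is only an illustration of the graph idea, not part of AlphaFold: it assumes one 3D point per residue and a hypothetical 8 Å contact threshold (a common choice for defining residue contacts), and the function name `spatial_graph` is made up for this example.

```python
import math


def spatial_graph(coords, threshold=8.0):
    """Map each residue index to the residues lying within `threshold` angstroms.

    `coords` holds one (x, y, z) point per residue; the threshold is an
    illustrative assumption, not a value taken from AlphaFold.
    """
    n = len(coords)
    edges = {i: [] for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            if math.dist(coords[i], coords[j]) < threshold:
                edges[i].append(j)
                edges[j].append(i)
    return edges


# Usage: a straight toy chain with 3.8 angstrom spacing, roughly the
# distance between consecutive alpha-carbon atoms.
chain = [(3.8 * i, 0.0, 0.0) for i in range(4)]
graph = spatial_graph(chain)
# graph == {0: [1, 2], 1: [0, 2, 3], 2: [0, 1, 3], 3: [1, 2]}
```

In AlphaFold the graph is not given as input: the network has to infer it, which is what "reasoning over the implicit graph that it is building" refers to.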

The system was trained on around 170,000 protein structures from the publicly available Protein Data Bank (PDB), together with large databases of protein sequences whose structures are unknown.

About CASP

Critical Assessment of protein Structure Prediction (CASP) is a community-wide experiment for protein structure prediction that has taken place every two years since 1994. CASP provides an independent mechanism for the assessment of methods of protein structure modelling.

For the 2020 experiment, the organisers posted sequences of unknown protein structures for modelling from May to August this year. Protein models from various research groups around the world were then collected and evaluated as the experimental coordinates became available.

The main metric used by CASP to measure the accuracy of predictions is the Global Distance Test (GDT), which ranges from 0 to 100. Roughly speaking, GDT is the percentage of amino acid residues that lie within a threshold distance of their correct positions. This year, the AlphaFold system achieved a median score of 92.4 GDT across all targets. For the hardest protein targets, AlphaFold achieved a median score of 87.0 GDT. This is a significant increase in accuracy compared with previous rounds: the best score in 2018, achieved by the first version of AlphaFold, was under 60 GDT, and in 2016 it was around 40 GDT.
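The "percentage within a threshold" idea can be sketched in a few lines. The commonly reported GDT_TS variant averages the percentages of residues within 1, 2, 4 and 8 Å of their experimental positions. This simplified version assumes per-residue deviations (in Å) are already available from a single, fixed superposition of model and experiment; the official score searches over many superpositions to maximise each count, so treat this as an approximation. The function name `gdt_ts` is hypothetical.

```python
def gdt_ts(deviations, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Simplified GDT_TS from per-residue deviations in angstroms.

    Assumes the model is already superimposed on the experimental structure.
    Returns the mean, over the cutoffs, of the percentage of residues whose
    deviation is at most that cutoff.
    """
    if not deviations:
        raise ValueError("need at least one residue")
    n = len(deviations)
    percents = [100.0 * sum(d <= c for d in deviations) / n for c in cutoffs]
    return sum(percents) / len(percents)


# Usage: 25% of residues within 1 A, 50% within 2 A, 75% within 4 A and 8 A.
score = gdt_ts([0.5, 1.5, 3.0, 10.0])  # 56.25
```

Averaging over several cutoffs means a model is rewarded both for getting most residues roughly right and for placing many of them very precisely.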

You can find the abstracts from all of the participating groups from 2020 here.

Find out more:

DeepMind’s blog post: “AlphaFold: a solution to a 50-year-old grand challenge in biology”.

Nature paper published on the first version of AlphaFold: “Improved protein structure prediction using potentials from deep learning”.