AIhub.org

AI transparency in practice: a report

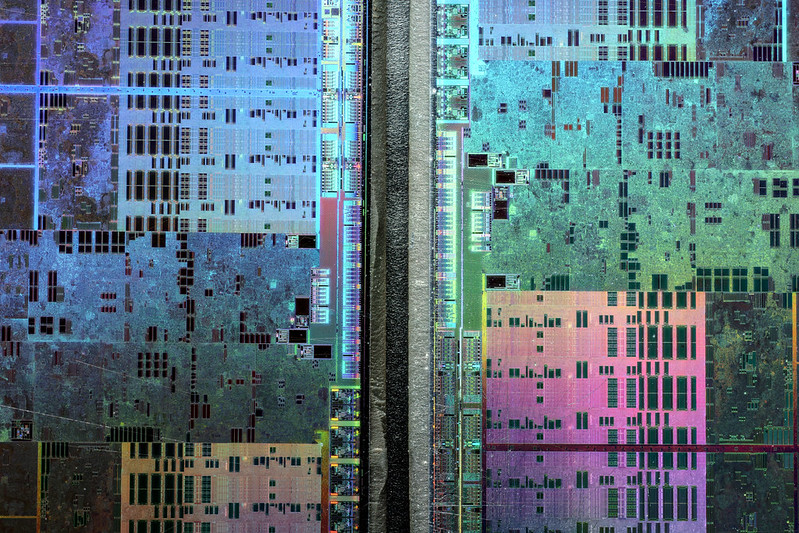

Fritzchens Fritz / Better Images of AI / GPU shot etched 5 / Licenced by CC-BY 4.0


A report, co-authored by Ramak Molavi Vasse’i (Mozilla’s Insights Team) and Jesse McCrosky (Thoughtworks), investigates the issue of AI transparency. The pair dig into what AI transparency actually means, and aim to provide useful and actionable information for specific stakeholders. The report also details a survey of current approaches, assesses their limitations, and outlines how meaningful transparency might be achieved.

The authors have highlighted the following key findings from their report:

- The focus of builders is primarily on system accuracy and debugging, rather than helping end users and impacted people understand algorithmic decisions.

- AI transparency is rarely prioritized by the leadership of respondents’ organizations, partly because there is little legal pressure to comply with transparency requirements.

- While there is active research around AI explainability (XAI) tools, there are fewer examples of effective deployment and use of such tools, and little confidence in their effectiveness.

- Apart from information on data bias, there is little work on sharing information on system design, metrics, or wider impacts on individuals and society. Builders generally do not employ criteria established for social and environmental transparency, nor do they consider unintended consequences.

- Providing appropriate explanations to various stakeholders poses a challenge for developers. There is a noticeable discrepancy between the information survey respondents currently provide and the information they would find useful and recommend.

Topics covered in the report include:

- Meaningful AI transparency

- Transparency stakeholders and their needs

- Motivations and priorities of builders around AI transparency

- Transparency tools and methods

- Awareness of social and ecological impact

- Transparency delivery – practices and recommendations

- Ranking challenges for greater AI transparency

You can read the report in full here. A PDF version is here.

Lucy Smith is Senior Managing Editor for AIhub.

