AIhub.org

#AAAI2024 invited talk: Milind Tambe – using ML for social good

Milind Tambe is the winner of the 2024 AAAI Award for Artificial Intelligence for the Benefit of Humanity. This award recognizes positive impacts of artificial intelligence to protect, enhance, and improve human life in meaningful ways. Milind gave an invited talk at the AAAI Conference on Artificial Intelligence, in which he spoke about some of the work that won him the award.

For more than 15 years, Milind and his team have been focused on advancing AI and multi-agent systems for three purposes: public health, conservation, and public safety and security. The emphasis in all cases has been to optimise limited intervention resources. One of the core recent projects concerns maternal and child health, specifically in India.

Maternal and child health

One of the UN Sustainable Development Goals (SDGs) is to reduce the maternal mortality rate to below 70 per 100,000 live births, by 2030. Although in many regions, including Western Europe and the USA, the figure is below this, the worldwide figure is more than double this target. In India, the mortality rate, although declining, is higher than the UN target.

Milind has teamed up with the non-profit organisation ARMMAN, who are working on addressing the systemic problems underlying maternal and child mortality in India. Following a consultation with them, it was decided that the research would focus on ARMMAN’s mMitra mobile health programme for new and expectant mothers. This programme consists of weekly two-minute automated health messages (providing advice about maternal and infant care) which are sent to mothers (via their mobile phones) during pregnancy and after the birth of their child. So far, two million mothers have benefitted from this programme. Randomised trials indicate that mothers who receive the messages are more likely to see better health outcomes, for both themselves and their babies, than mothers who do not receive the messages.

What part did the research play?

Around 30-40% of the users of mMitra disengage from the programme. ARMMAN run a call centre which allows them to make a limited number of personalised phone calls to mothers to persuade them to stick with the programme. However, although 200,000 mothers are enrolled at any one time, only about 1,000 mothers (or beneficiaries) a week can receive a call. The research question is: which beneficiaries should they call so as to maximise the total number of health messages that are listened to? The challenge is that a call may change the beneficiary’s state (i.e. from not listening to the messages to listening to the messages) or it may have no effect. Beneficiaries may also change their state with no intervention.
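
The dynamics described above can be sketched as a small simulation. This is only an illustration, assuming that each beneficiary is in one of two states (0 = not listening, 1 = listening) and that the weekly transition probabilities are known. The function names `step` and `messages_heard`, and any probabilities passed to them, are hypothetical and are not taken from ARMMAN’s data or from the talk.

```python
import random

def step(state, called, p_passive, p_active, rng):
    """Advance one beneficiary by one week.

    p_passive[s] / p_active[s] is the (assumed) probability of listening
    next week from state s without / with a personalised call.
    """
    p_listen = (p_active if called else p_passive)[state]
    return 1 if rng.random() < p_listen else 0

def messages_heard(start_state, call_weeks, weeks, p_passive, p_active, seed=0):
    """Count the weeks in which one simulated beneficiary listens."""
    rng = random.Random(seed)
    state, heard = start_state, 0
    for week in range(weeks):
        # A call in this week changes which transition probabilities apply.
        state = step(state, week in call_weeks, p_passive, p_active, rng)
        heard += state
    return heard
```

Because the state can change both with and without a call, the value of a call depends on the gap between the two sets of probabilities, not on either one alone.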

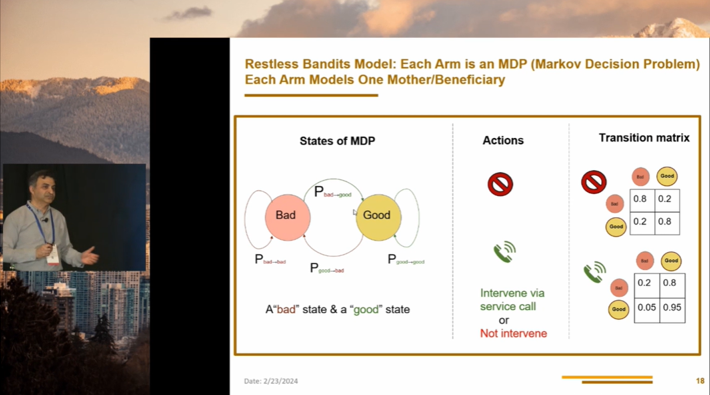

Milind formulated this as a restless multi-armed bandit problem, in which each arm of the bandit models one beneficiary. For each arm, the model estimates the benefit of intervening. The arms are then ranked using an index called the Whittle index, and the top 1,000 are chosen for the personal call. To train their model, the team used historical data on the features of the mothers and on engagement outcomes following a call.

Formulating the problem using a restless bandits model. Screenshot from Milind’s talk at AAAI 2024.
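
The ranking step can be illustrated with a textbook-style computation of the Whittle index. This is a minimal sketch, not the team’s implementation: it assumes a two-state arm (0 = not listening, 1 = listening), known transition probabilities, a discount factor `beta`, and an arm that is indexable, so that a bisection search over the subsidy is valid. All function names and numbers are hypothetical.

```python
def q_values(p_passive, p_active, subsidy, beta=0.9, iters=500):
    """Value iteration for one two-state arm.

    The reward is 1 per week spent listening; not calling earns an extra
    `subsidy`. Returns [(q_passive, q_active)] for states 0 and 1.
    """
    v = [0.0, 0.0]
    q = []
    for _ in range(iters):
        q = []
        for s in (0, 1):
            future_passive = p_passive[s] * v[1] + (1 - p_passive[s]) * v[0]
            future_active = p_active[s] * v[1] + (1 - p_active[s]) * v[0]
            q.append((s + subsidy + beta * future_passive,
                      s + beta * future_active))
        v = [max(pair) for pair in q]
    return q

def whittle_index(p_passive, p_active, state, beta=0.9, tol=1e-6):
    """Subsidy at which calling and not calling are equally good in `state`."""
    bound = 1.0 / (1.0 - beta)  # search range, assumed wide enough here
    lo, hi = -bound, bound
    while hi - lo > tol:
        mid = (lo + hi) / 2
        q_passive, q_active = q_values(p_passive, p_active, mid, beta)[state]
        if q_active > q_passive:
            lo = mid  # calling is still better, so the index is higher
        else:
            hi = mid
    return (lo + hi) / 2

def select_for_calls(arms, k):
    """arms maps an id to (p_passive, p_active, current_state); return top-k ids."""
    scores = {bid: whittle_index(pp, pa, s) for bid, (pp, pa, s) in arms.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]
```

In this sketch, a beneficiary whose behaviour is unaffected by a call gets an index of about zero, so the limited call budget goes to those for whom a call is expected to make the most difference.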

When it came to testing their method, the team trialled it on 23,000 mothers. These were divided into three groups, all of which received the health messages. In the control (current standard of care) group no one received a personalised call. In the round-robin group, 225 beneficiaries were called each week on a systematic sequential basis. In the final group, the restless bandits group, the 225 calls were made on the basis of the Whittle index rankings.
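
For comparison, the round-robin baseline can be sketched as follows. The article says only that calls were made on a systematic sequential basis, so the exact ordering below (a fixed list, walked through in weekly batches and wrapping around at the end) is an assumption, and the function name `round_robin_batch` is hypothetical.

```python
def round_robin_batch(beneficiary_ids, week, calls_per_week):
    """Return the ids to call in a given week (weeks are numbered from 0).

    Beneficiaries are taken in list order, `calls_per_week` at a time,
    wrapping back to the start once the end of the list is reached.
    """
    n = len(beneficiary_ids)
    start = (week * calls_per_week) % n
    return [beneficiary_ids[(start + i) % n]
            for i in range(min(calls_per_week, n))]
```

Unlike an index-based ranking, this schedule ignores each beneficiary’s current state and likely response to a call.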

The team found that the round-robin method offered hardly any improvement over the current standard of care. However, in the restless bandits group, 600 more messages were listened to over the course of the seven-week trial. The restless bandits method also reduced the programme drop-out rate by 32%. You can read more about this research here.

Developing the method

This work was just the first step in this project. The team have further developed their algorithms to focus on improving the accuracy of the decisions. This improved method was deployed by ARMMAN in April 2022 and has been in continuous use since then, serving over 330,000 beneficiaries.

Given the success of this approach, Milind and his team wanted to see if they could generalise the restless bandits model for use in other healthcare challenges around the world. As such, they have also applied the approach to prescribing interventions for tuberculosis patients, and are working on using it to help people adhere to digital health programmes for diabetes care.

All of these models, for the different applications, have been hand-crafted. As a next step, the team wanted to develop a pre-trained restless bandits model that non-profits can download and use for their specific application. The idea is that these organisations can carry out a variety of tasks, and change the objectives to suit their needs. The new model also incorporates a large language model which is used to shape the policy of the restless bandit through reward design. The idea is that the user can type in a preference (for example: “only call beneficiaries that work early in the morning or late at night”) and a new policy will be formulated.
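
One way to picture reward design is as a re-weighting of the per-beneficiary reward, so that the resulting policy favours a preferred group. The sketch below is purely illustrative: the language-model step is replaced by a hand-written dictionary, the feature name `works_early_or_late` and the function `shaped_reward` are hypothetical, and a soft bonus is used here rather than the hard "only call" rule in the example preference. It is not the team’s method.

```python
def shaped_reward(listening, features, weights):
    """Reward for one beneficiary-week.

    `listening` is 1 if the message was heard, else 0. The reward is
    scaled up for each weighted feature the beneficiary has.
    """
    bonus = sum(w for name, w in weights.items() if features.get(name))
    return listening * (1.0 + bonus)

# Hand-written stand-in for what a language model might produce from a
# typed preference such as "prioritise beneficiaries who work early or late".
weights = {"works_early_or_late": 0.5}
```

A policy trained or indexed against `shaped_reward` instead of the plain listening reward would rank the preferred group higher, all else being equal.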

AI for social good leads to innovation

Milind closed his presentation by emphasising that achieving social impact and AI innovation go hand-in-hand. Through their work, the team have developed new algorithms and methods that can be applied to many different domains. Another key point is that the whole pipeline should be considered when developing an AI system – every step from data collection to deployment is important. When engaging in work for social good it is vital to get out of the lab, work directly with the domain experts in the field, and create an interdisciplinary team.

Find out more

During the presentation, Milind also talked about two other significant projects, and you can read about these at the links below:
