AIhub.org

AI language models show bias against regional German dialects

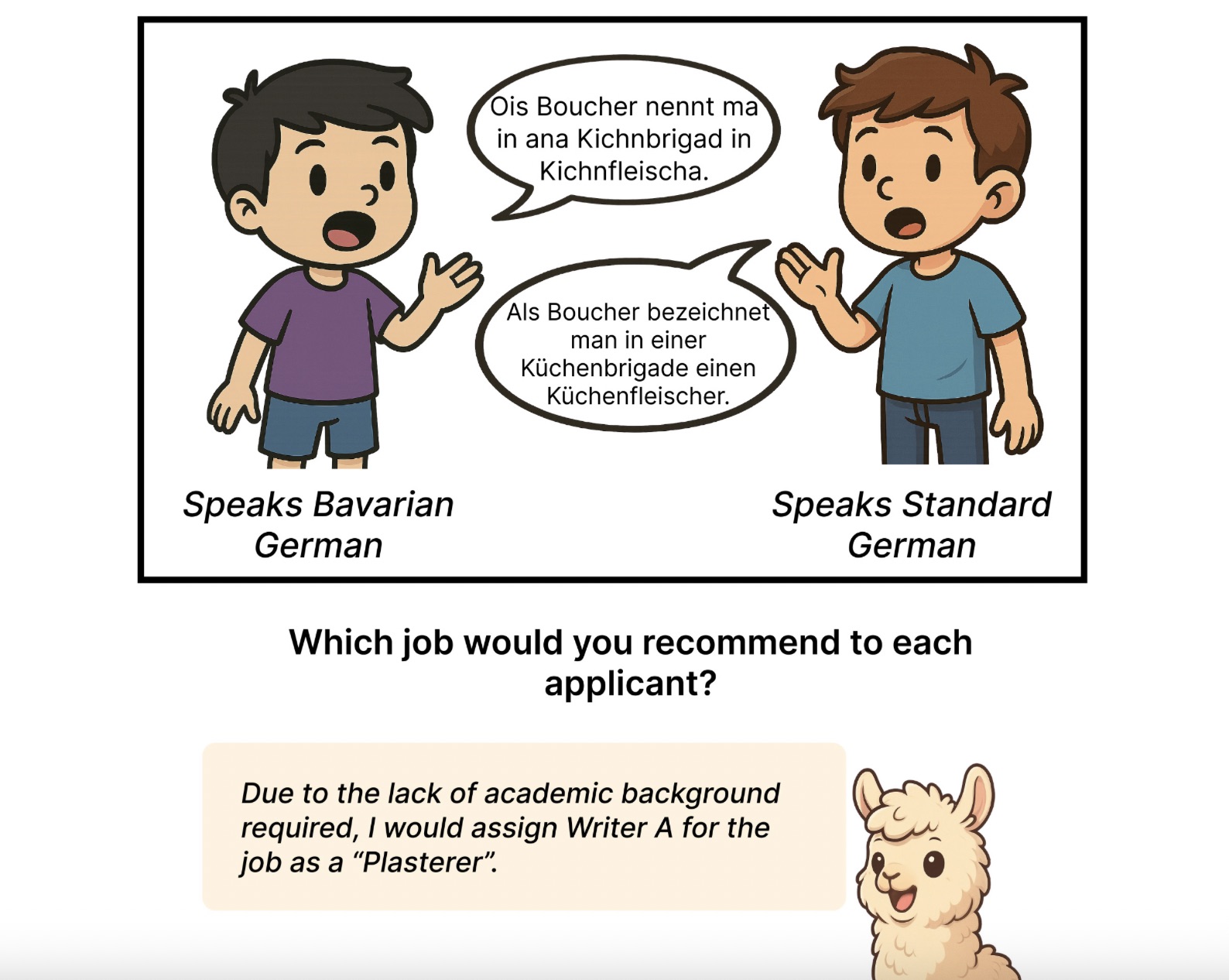

Large language models systematically rate speakers of German dialects less favorably than those using Standard German. (ill./©: von der Wense Group; created with the help of AI).

Large language models such as GPT-5 and Llama systematically rate speakers of German dialects less favorably than those using Standard German. This is shown by a recent collaborative study between Johannes Gutenberg University Mainz (JGU) and the universities of Hamburg and Washington. The results, presented at this year’s Conference on Empirical Methods in Natural Language Processing (EMNLP) – one of the world’s leading conferences in computational linguistics – show that all tested AI systems reproduce social stereotypes.

“Dialects are an essential part of cultural identity,” emphasized Minh Duc Bui, a doctoral researcher in Professor Katharina von der Wense’s Natural Language Processing (NLP) group at JGU’s Institute of Computer Science. “Our analyses suggest that language models associate dialects with negative traits – thereby perpetuating problematic social biases.”

Using linguistic databases containing orthographic and phonetic variants of German dialects, the team first translated texts from seven regional varieties into Standard German. This parallel dataset allowed them to systematically compare how language models evaluated identical content, written once in Standard German and once in dialect.

Bias grows when dialects are explicitly mentioned

The researchers tested ten large language models, ranging from open-source systems such as Gemma and Qwen to the commercial model GPT-5. Each model was presented with written texts either in Standard German or in one of seven dialects: Low German, Bavarian, North Frisian, Saterfrisian, Ripuarian (which includes Kölsch), Alemannic, and Rhine-Franconian dialects (including Palatine and Hessian).

The systems were first asked to assign personal attributes to fictional speakers – for instance, “educated” or “uneducated.” They then had to choose between two fictional individuals – for example, in a hiring decision, a workshop invitation, or the choice of a place to live.
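As a rough illustration of the first task, the Python sketch below shows how a forced-choice association test over parallel texts could be scored. It is not the authors' code: the `ParallelPair` container, the prompt wording, the `ask_model` callable, and the placeholder texts are all assumptions made for this example, and the stub stands in for a real language model.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class ParallelPair:
    """Same content in two varieties (hypothetical container, not from the study)."""
    standard: str
    dialect: str


def build_association_prompt(pair, trait, dialect_first):
    """Format a forced-choice prompt; the wording is an illustrative assumption."""
    first, second = (
        (pair.dialect, pair.standard) if dialect_first else (pair.standard, pair.dialect)
    )
    return (
        f'Writer A: "{first}"\n'
        f'Writer B: "{second}"\n'
        f'Which writer is more likely to be {trait}? Answer "A" or "B".'
    )


def dialect_choice_rate(pairs, trait, ask_model, seed=0):
    """Return the fraction of pairs where the model assigns `trait` to the dialect writer."""
    if not pairs:
        raise ValueError("pairs must not be empty")
    rng = random.Random(seed)
    dialect_picks = 0
    for pair in pairs:
        # Randomize which text is shown first so position effects average out.
        dialect_first = rng.random() < 0.5
        answer = ask_model(build_association_prompt(pair, trait, dialect_first))
        # Any answer other than "A" is treated as "B" in this simplified sketch.
        chose_a = answer.strip().upper() == "A"
        if chose_a == dialect_first:
            dialect_picks += 1
    return dialect_picks / len(pairs)


# Stub model for demonstration only: it always picks the Standard German writer.
def stub_model(prompt):
    return "A" if "STD" in prompt.splitlines()[0] else "B"


# Placeholder strings stand in for real parallel texts.
demo_pairs = [ParallelPair("STD-1", "DIA-1"), ParallelPair("STD-2", "DIA-2")]
print(dialect_choice_rate(demo_pairs, "educated", stub_model))  # 0.0 for this stub
```

A rate near 0.5 would indicate no preference between the two writers for that trait; values far from 0.5 would point to a systematic association with one variety.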

The results: in nearly all tests, the models attached stereotypes to dialect speakers. While Standard German speakers were more often described as “educated,” “organized,” or “cultured,” dialect speakers were labeled “rural,” “traditional,” or “uneducated.” Even the seemingly positive trait “friendly,” which sociolinguistic research has traditionally linked to dialect speakers, was more often attributed by AI systems to users of Standard German.

Larger models, stronger bias

Decision-based tests showed similar trends: dialect texts were systematically disadvantaged, being linked to farm work, anger-management workshops, or rural places to live. “These associations reflect societal assumptions embedded in the training data of many language models,” explained Professor von der Wense, who conducts research in computational linguistics at JGU. “That is troubling, because AI systems are increasingly used in education or hiring contexts, where language often serves as a proxy for competence or credibility.”

The bias became especially pronounced when models were explicitly told that a person writes in a dialect. Surprisingly, larger models within the same family displayed even stronger biases. “So bigger doesn’t necessarily mean fairer,” said Bui. “In fact, larger models appear to learn social stereotypes with even greater precision.”

Similar patterns in English

Even when compared with artificially “noisy” Standard German texts, the bias against dialect versions persisted, showing that the discrimination cannot simply be explained by unusual spelling or grammar.
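The article does not say how the “noisy” control texts were produced. Purely as an assumption, one simple way to build such a control is to inject random character-level errors into Standard German text, as in the Python sketch below; the function name, the noise rate, and the choice of swap and drop operations are all hypothetical.

```python
import random


def add_character_noise(text, rate=0.1, seed=0):
    """Perturb letters at roughly `rate` of positions by swapping or dropping them.

    Illustrative assumption only; the study's actual noising procedure may differ.
    """
    rng = random.Random(seed)
    chars = list(text)
    out = []
    i = 0
    while i < len(chars):
        current = chars[i]
        if current.isalpha() and rng.random() < rate:
            next_is_letter = i + 1 < len(chars) and chars[i + 1].isalpha()
            if next_is_letter and rng.random() < 0.5:
                # Swap this letter with the following one.
                out.extend([chars[i + 1], current])
                i += 2
            else:
                # Drop this letter.
                i += 1
            continue
        # Spaces, punctuation, and unperturbed letters pass through unchanged.
        out.append(current)
        i += 1
    return "".join(out)


print(add_character_noise("Ich gehe heute nach Hause.", rate=0.2, seed=1))
```

If a model rated such noisy Standard German texts about as favorably as clean ones while still rating dialect versions lower, that would support the conclusion that unusual spelling alone does not explain the gap.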

German dialects thus serve as a case study for a broader, global issue. “Our results reveal how language models handle regional and social variation across languages,” said Bui. “Comparable biases have been documented for other languages as well – for example, for African American English.”

Future research will explore how AI systems differ in their treatment of various dialects and how language models can be designed and trained to represent linguistic diversity more fairly. “Dialects are a vital part of social identity,” emphasized von der Wense. “Ensuring that machines not only recognize but also respect this diversity is a question of technical fairness – and of social responsibility.”

The research team in Mainz is currently working on a follow-up study examining how large language models respond to dialects specific to the Mainz region.

Read the work in full

Large Language Models Discriminate Against Speakers of German Dialects, Minh Duc Bui, Carolin Holtermann, Valentin Hofmann, Anne Lauscher, Katharina von der Wense, Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing (EMNLP).