AIhub.org

Making sense of vision and touch: #ICRA2019 best paper award video and interview

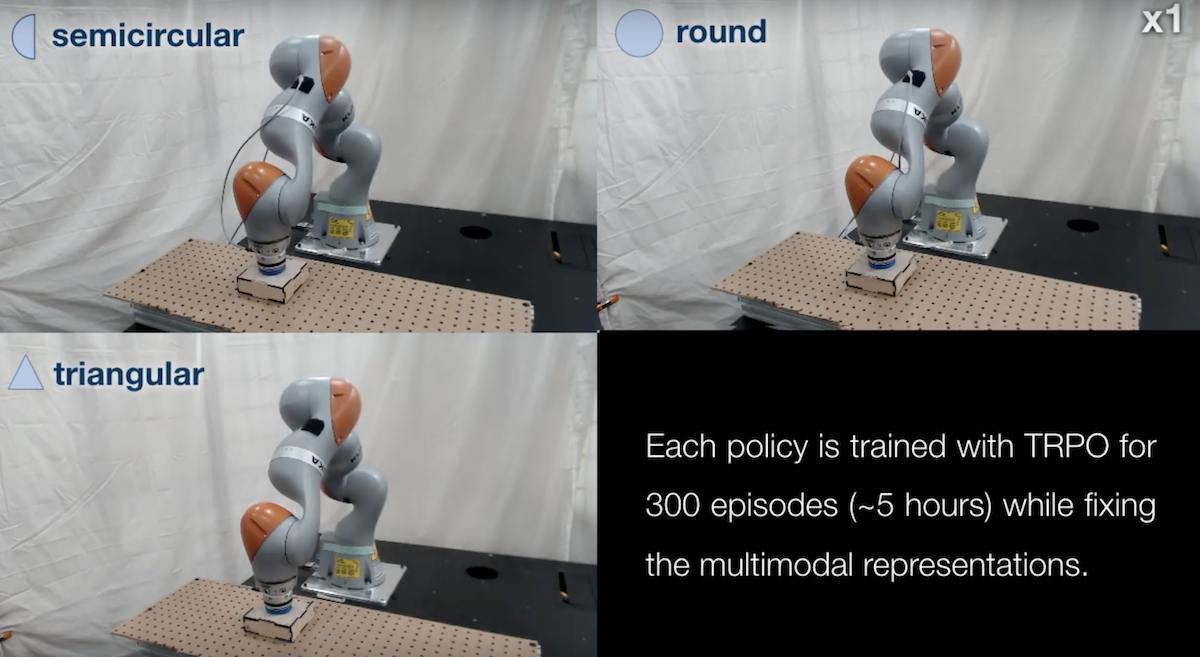

PhD candidate Michelle A. Lee from the Stanford AI Lab won the ICRA 2019 best paper award for her work "Making Sense of Vision and Touch: Self-Supervised Learning of Multimodal Representations for Contact-Rich Tasks". You can read the paper on arXiv (arXiv:1810.10191).

Audrow Nash was there to capture her pitch.

And here’s the official video about the work.

Full reference

Lee, Michelle A., Yuke Zhu, Krishnan Srinivasan, Parth Shah, Silvio Savarese, Li Fei-Fei, Animesh Garg, and Jeannette Bohg. “Making sense of vision and touch: Self-supervised learning of multimodal representations for contact-rich tasks.” arXiv preprint arXiv:1810.10191 (2018).

AIhub is dedicated to free high-quality information about AI.
