AIhub.org

Integrating human behaviour into AI systems

By Lachlan Gilbert

One of the holy grails in the development of artificial intelligence (AI) is giving machines the ability to predict intent when interacting with humans.

We humans do it all the time, without even being aware of it: we observe, we listen, and we use our past experience to reason about what someone is doing and why, so that we can predict what they will do next.

At the moment, AI can do a plausible job of recognising a person's intent after the fact, that is, once the action has already happened. It may even have a list of predefined responses that a human is likely to give in a given situation. But when an AI system or machine has only a few clues or partial observations to go on, its responses can sometimes be a little…robotic.

Humans and machines

Dr Lina Yao, a senior lecturer at UNSW Engineering, is principal investigator in a project to get AI systems and human-machine interfaces up to speed with the finer nuances of human behaviour. She says the ultimate goal is for her research to be used in autonomous AI systems, robots and even cyborgs, but the first step is focused on the interface between humans and intelligent machines.

“What we’re doing in these early phases is to help machines learn to act like humans based on our daily interactions and the actions that are influenced by our own judgement and expectations – so that they can be better placed to predict our intentions,” she says. “In turn, this may even lead to new actions and decisions of our own, so that we establish a cooperative relationship.”

Dr Lina Yao. Photo: UNSW

Dr Yao would like to see less obvious aspects of human behaviour integrated into AI systems to improve intent prediction: gestures, eye movement, posture, facial expression and even micro-expressions – the tell-tale physical signs when someone reacts emotionally to a stimulus but tries to keep it hidden.

This is a tall order, as humans themselves are not infallible when trying to predict the intention of another person.

“Sometimes people may take some actions that deviate from their own regular habits, which may have been triggered by the external environment or the influence of another person’s actions,” she says.

All the right moves

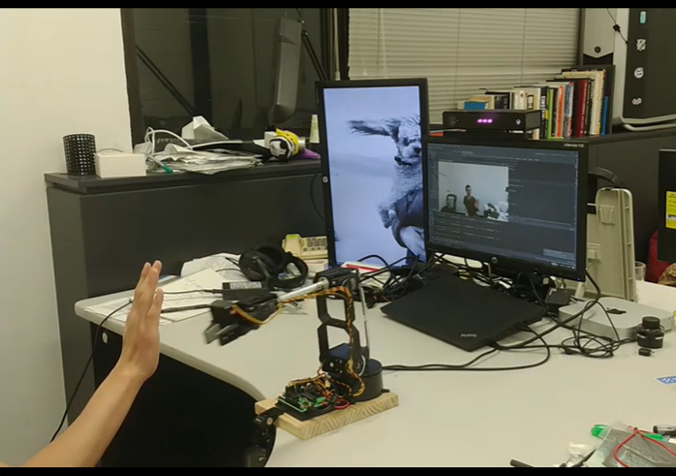

Nevertheless, making AI systems and machines more finely tuned to the ways that humans initiate an action is a good start. To that end, Dr Yao and her team are developing a prototype human-machine interface system designed to capture the intent behind human movement.

“We can learn and predict what a human would like to do when they’re wearing an EEG [electroencephalogram] device,” Dr Yao says.

“While wearing one of these devices, whenever the person makes a movement, their brainwaves are collected which we can then analyse.

“Later we can ask people to think about moving with a particular action – such as raising their right arm. So not actually raising the arm, but thinking about it, and we can then collect the associated brain waves.”

Dr Yao says recording this data has the potential to help people unable to move or communicate freely due to disability or illness. Brainwaves recorded with an EEG device could be analysed and used to move machinery such as a wheelchair, or even to communicate a request for assistance. “Someone in an intensive care unit may not have the ability to communicate, but if they were wearing an EEG device, the pattern in their brainwaves could be interpreted to say they were in pain or wanted to sit up, for example,” Dr Yao says.

“So an intent to move or act that was not physically possible, or not able to be expressed, could be understood by an observer thanks to this human-machine interaction. The technology is already there to achieve this, it’s more a matter of putting all the working parts together.”
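To make the idea of reading an imagined movement from brainwaves more concrete, here is a minimal sketch of a classical motor-imagery pipeline. It is not the team's method (their 2020 paper, listed at the end, uses graph embeddings and temporal attention); it is a deliberately simple stand-in built on several assumptions: two electrodes named C3 and C4 over the left and right motor cortex, a 10 Hz "mu" rhythm that weakens over the hemisphere opposite the arm a person imagines moving, synthetic signals in place of real recordings, and hypothetical wheelchair command names. Each trial is reduced to log mu-band power per channel, and a nearest-centroid classifier labels new trials.

```python
import math
import random

FS = 128           # sampling rate in Hz (assumed)
N = 128            # samples per one-second trial; with N == FS each integer Hz is one DFT bin
MU_BAND = (8, 12)  # mu rhythm band in Hz

# Hypothetical mapping from a decoded imagined movement to a machine command
COMMANDS = {"left_arm": "turn wheelchair left", "right_arm": "turn wheelchair right"}

def band_power(signal, fs, band):
    """Power summed over the integer-Hz DFT bins inside `band` (inclusive)."""
    n = len(signal)
    total = 0.0
    for f in range(band[0], band[1] + 1):
        re = sum(x * math.cos(2 * math.pi * f * t / fs) for t, x in enumerate(signal))
        im = sum(x * math.sin(2 * math.pi * f * t / fs) for t, x in enumerate(signal))
        total += (re * re + im * im) / n
    return total

def simulate_trial(imagined, rng):
    """Synthetic two-channel trial: a 10 Hz mu rhythm plus Gaussian noise on C3 and C4.
    Imagining one arm moving weakens the rhythm over the opposite hemisphere."""
    def channel(amplitude):
        phase = rng.uniform(0, 2 * math.pi)
        return [amplitude * math.sin(2 * math.pi * 10 * t / FS + phase) + rng.gauss(0, 0.5)
                for t in range(N)]
    c3 = channel(0.3 if imagined == "right_arm" else 1.0)  # left hemisphere
    c4 = channel(0.3 if imagined == "left_arm" else 1.0)   # right hemisphere
    return c3, c4

def features(trial):
    """Log mu-band power for each channel."""
    return [math.log(band_power(ch, FS, MU_BAND)) for ch in trial]

def train_centroids(examples):
    """Mean feature vector per label (the 'training' step of a nearest-centroid classifier)."""
    sums, counts = {}, {}
    for feats, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(feats)
            counts[label] = 0
        sums[label] = [s + x for s, x in zip(sums[label], feats)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}

def classify(feats, centroids):
    """Return the label whose centroid is closest in squared Euclidean distance."""
    return min(centroids,
               key=lambda lbl: sum((a - b) ** 2 for a, b in zip(feats, centroids[lbl])))

rng = random.Random(0)
labels = ["left_arm", "right_arm"]

# "Calibration" session: labelled imagined movements
train = [(features(simulate_trial(lbl, rng)), lbl) for lbl in labels for _ in range(20)]
centroids = train_centroids(train)

# Held-out trials the classifier has not seen
held_out = [(features(simulate_trial(lbl, rng)), lbl) for lbl in labels for _ in range(10)]
correct = sum(classify(f, centroids) == lbl for f, lbl in held_out)
print(f"held-out accuracy: {correct}/{len(held_out)}")

# Decode one new imagined movement and turn it into a command
new_trial = features(simulate_trial("right_arm", rng))
print(COMMANDS[classify(new_trial, centroids)])
```

Because the simulated classes are cleanly separated, the sketch classifies them easily; real EEG is far noisier and varies between people and sessions, which is why published systems rely on richer spatial and temporal models.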

Partners for life

Dr Yao says the ultimate goal in developing AI systems and machines that assist humans is for them to be seen not merely as tools, but as partners.

“What we are doing is trying to develop some good algorithms that can be deployed in situations that require decision making,” she says.

“For example, in a rescue situation, an AI system can be used to help rescuers take the optimal strategy to locate a person or people more precisely. Such a system can use localisation algorithms that use GPS locations and other data to pinpoint people, as well as assessing the window of time needed to get to someone, and making recommendations on the best course of action.

“Ultimately a human would make the final call, but the important thing is that AI is a valuable collaborator in such a dynamic environment. This sort of technology is already being used today.”
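The article does not say how such a rescue system works internally, so the following is only a rough illustration of the three steps Dr Yao mentions: locating people from GPS data, estimating how long it would take to reach them, and recommending an order. Everything here is an assumption: each person's position is taken as the average of a few noisy GPS fixes, distance is the great-circle (haversine) distance, travel speed is constant, and each person has a hypothetical "time window" before they must be reached. People are ranked by slack (window minus travel time), and anyone who cannot be reached in time is listed last for a human to review rather than dropped.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def estimate_position(fixes):
    """Average several noisy GPS fixes. Reasonable over a small area, but it breaks
    down near the poles or across the 180th meridian."""
    lats, lons = zip(*fixes)
    return sum(lats) / len(lats), sum(lons) / len(lons)

def recommend(rescuer, people, speed_kmh):
    """Return (name, travel_min, slack_min, reachable) tuples, most urgent reachable first.

    people: list of (name, gps_fixes, window_min), where window_min is the assumed
    time left before that person must be reached.
    """
    plan = []
    for name, fixes, window_min in people:
        lat, lon = estimate_position(fixes)
        travel_min = haversine_km(rescuer[0], rescuer[1], lat, lon) / speed_kmh * 60
        slack = window_min - travel_min
        plan.append((name, round(travel_min, 1), round(slack, 1), slack >= 0))
    # Reachable people first, least margin first; unreachable people go last
    plan.sort(key=lambda entry: (not entry[3], entry[2]))
    return plan

# Hypothetical scenario: rescuer at (0, 0), travelling at 60 km/h
people = [
    ("A", [(0.009, 0.0), (0.011, 0.0)], 10),
    ("B", [(0.05, 0.001), (0.05, -0.001)], 8),
    ("C", [(0.2, 0.0)], 15),
]
plan = recommend((0.0, 0.0), people, speed_kmh=60)
for entry in plan:
    print(entry)
```

Ranking by slack treats each person independently; a real planner would also account for the fact that reaching one person delays reaching the next, and, as Dr Yao notes, the final call stays with a human.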

But while working in partnership with humans is one thing, working completely independently of them is a long way down the track. Dr Yao says autonomous AI systems and machines may one day look at us as belonging to one of three categories after observing our behaviour: peer, bystander or competitor. While this may seem cold and aloof, Dr Yao says these categories need not be fixed: a person could move from one to another as the context evolves. And at any rate, she says, this sort of cognitive categorisation is actually very human.

“When you think about it, we are constantly making these same judgements about the people around us every day,” she says.

Read the research:

Motor Imagery Classification via Temporal Attention Cues of Graph Embedded EEG Signals (2020)

Dalin Zhang, Kaixuan Chen, Debao Jian, Lina Yao

A Survey on Deep Learning based Brain-Computer Interface: Recent Advances and New Frontiers (2019)

Xiang Zhang, Lina Yao, Xianzhi Wang, Jessica Monaghan, David McAlpine, Yu Zhang

Deep Learning for Sensor-based Human Activity Recognition: Overview, Challenges and Opportunities (2018)

Kaixuan Chen, Dalin Zhang, Lina Yao, Bin Guo, Zhiwen Yu, Yunhao Liu