AIhub.org

Identifying light sources using machine learning

Identifying light sources is important for the development of photonic technologies such as light detection and ranging (LiDAR) and microscopy. Typically, a large number of measurements are needed to classify light sources such as sunlight, laser radiation, and molecular fluorescence. Identification has conventionally required collecting photon statistics or performing quantum state tomography. In recently published work, researchers used a neural network to dramatically reduce the number of measurements required to discriminate thermal light from coherent light at the single-photon level.

In their paper, authors from Louisiana State University, Universidad Nacional Autónoma de México and Max-Born-Institut describe their experimental and theoretical techniques. They demonstrate the potential of machine learning to perform discrimination of light sources at extremely low light levels. This is achieved by training single artificial neurons with the statistical fluctuations that characterize coherent and thermal states of light.
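For readers who want the statistics behind that last sentence, the textbook photon-number distributions for idealized single-mode coherent and thermal light with mean photon number $\bar{n}$ are given below. These are standard quantum-optics results quoted here as background, not formulas taken from the article, and the pseudo-thermal source in the experiment may only approximate the ideal thermal case.

$$
P_{\text{coh}}(n) = e^{-\bar{n}}\,\frac{\bar{n}^{\,n}}{n!},
\qquad
P_{\text{th}}(n) = \frac{\bar{n}^{\,n}}{(1+\bar{n})^{\,n+1}}
$$

The coherent (Poisson) distribution has variance $\bar{n}$, while the thermal (Bose-Einstein) distribution has the larger variance $\bar{n} + \bar{n}^{2}$. That difference in fluctuations is what a classifier can exploit.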

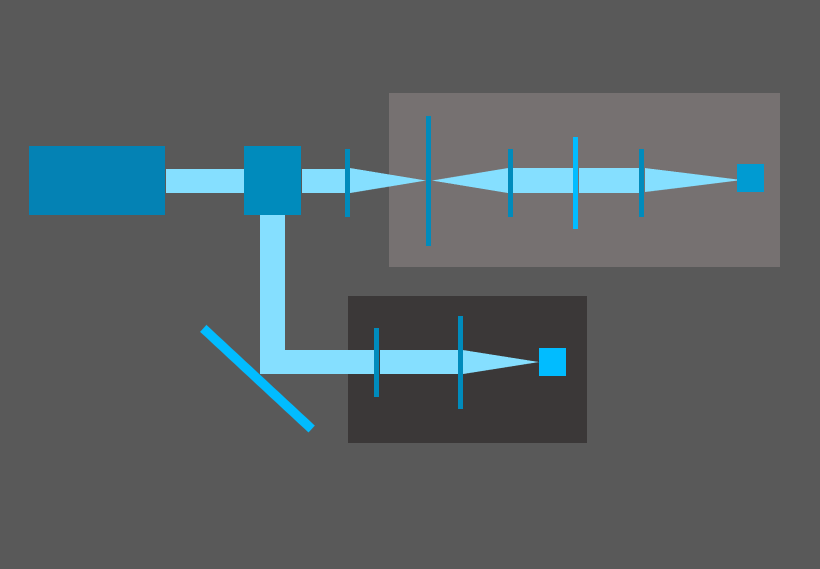

The experimental set-up involves a continuous wave (CW) laser that is divided by a 50:50 beam splitter. Half of the beam passes through optical components which generate pseudo-thermal light. The emerging photons are counted by a superconducting nanowire single-photon detector (SNSPD). The other half of the beam is used as a coherent light source and is detected by another SNSPD. The data are divided into time bins of 1 µs; this timeframe corresponds to the coherence time of the laser. The equipment is tuned so that the mean number of photons counted in each bin is below one.
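To get a feel for the kind of data such a set-up produces, the following is a minimal simulation sketch, not the authors' procedure. It assumes idealized single-mode sources: Poisson-distributed counts per time bin for coherent light and Bose-Einstein (geometric) counts for thermal light. The function name `simulate_counts`, the mean photon number of 0.8, and the number of bins are illustrative assumptions, and real detector effects such as dead time and dark counts are ignored.

```python
import numpy as np


def simulate_counts(mean_photons, n_bins, kind, rng):
    """Draw photon counts per time bin for an idealized single-mode source.

    Hypothetical helper for illustration only.
    """
    if kind == "coherent":
        # Coherent light: Poisson photon statistics.
        return rng.poisson(mean_photons, size=n_bins)
    if kind == "thermal":
        # Thermal light: Bose-Einstein statistics, i.e. a geometric
        # distribution on {0, 1, 2, ...} with success probability p.
        # NumPy's geometric sampler starts at 1, so shift down by one.
        p = 1.0 / (1.0 + mean_photons)
        return rng.geometric(p, size=n_bins) - 1
    raise ValueError(f"unknown source kind: {kind!r}")


rng = np.random.default_rng(0)
mean_photons = 0.8   # assumed value, chosen to be below one photon per bin
n_bins = 100_000     # assumed number of time bins

coherent = simulate_counts(mean_photons, n_bins, "coherent", rng)
thermal = simulate_counts(mean_photons, n_bins, "thermal", rng)

# Both sources have the same mean, but thermal light fluctuates more.
print("coherent mean / variance:", coherent.mean(), coherent.var())
print("thermal  mean / variance:", thermal.mean(), thermal.var())
```

With many bins the two sources are easy to tell apart from their variances alone. The difficulty addressed in the paper is doing so from far fewer measurements.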

The distributions of photon counts obtained from repeated runs of this experiment were used to train and test an ADALINE neuron and, for comparison, a naive Bayes classifier. ADALINE is a single-layer neural network model based on a linear processing element, proposed by Bernard Widrow, for binary classification. It has no hidden layers, simply consisting of inputs and an output neuron.
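As a rough illustration of how an ADALINE works, here is a minimal sketch trained on simulated data. It is not the authors' implementation. The assumptions are: idealized Poisson and Bose-Einstein counts stand in for the measured data, each sample is a normalized histogram of photon numbers over 160 time bins, features are standardized before training, and the weights are updated with a batch form of the Widrow-Hoff (least mean squares) rule. All names and parameter values are hypothetical.

```python
import numpy as np

MEAN_PHOTONS = 0.8  # assumed mean photon number per time bin (below one)
N_BINS = 160        # assumed number of time bins per sample
MAX_N = 4           # photon numbers >= MAX_N share the last histogram entry


def photon_histogram(counts):
    """Normalized histogram of photon numbers 0..MAX_N for one sample."""
    clipped = np.minimum(counts, MAX_N)
    return np.bincount(clipped, minlength=MAX_N + 1) / counts.size


def make_dataset(n_per_class, rng):
    """Histograms for idealized coherent (+1) and thermal (-1) sources."""
    p = 1.0 / (1.0 + MEAN_PHOTONS)
    features, labels = [], []
    for _ in range(n_per_class):
        features.append(photon_histogram(rng.poisson(MEAN_PHOTONS, N_BINS)))
        labels.append(1.0)
        features.append(photon_histogram(rng.geometric(p, N_BINS) - 1))
        labels.append(-1.0)
    return np.array(features), np.array(labels)


class Adaline:
    """Single linear neuron trained with a batch Widrow-Hoff (LMS) rule."""

    def __init__(self, n_features, learning_rate=0.05):
        self.weights = np.zeros(n_features)
        self.bias = 0.0
        self.learning_rate = learning_rate

    def net_input(self, X):
        # Linear activation: weighted sum of the inputs plus a bias.
        return X @ self.weights + self.bias

    def fit(self, X, y, epochs=300):
        for _ in range(epochs):
            # The error is measured on the linear output, before thresholding.
            error = y - self.net_input(X)
            self.weights += self.learning_rate * (X.T @ error) / len(y)
            self.bias += self.learning_rate * error.mean()
        return self

    def predict(self, X):
        # Threshold the linear output to obtain a binary class label.
        return np.where(self.net_input(X) >= 0.0, 1.0, -1.0)


rng = np.random.default_rng(0)
X_train, y_train = make_dataset(500, rng)
X_test, y_test = make_dataset(500, rng)

# Standardize with training-set statistics so gradient descent converges.
mu = X_train.mean(axis=0)
sigma = X_train.std(axis=0) + 1e-12
model = Adaline(n_features=MAX_N + 1).fit((X_train - mu) / sigma, y_train)

accuracy = np.mean(model.predict((X_test - mu) / sigma) == y_test)
print(f"test accuracy: {accuracy:.3f}")
```

The defining feature of ADALINE, as opposed to a perceptron, is that the weight update uses the error of the linear output rather than of the thresholded label. The threshold is applied only when making a prediction.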

The researchers also tested two other machine learning methods: a one-dimensional convolutional neural network (1D CNN) and a multilayer neural network (MNN). Interestingly, they found that these more sophisticated methods did not significantly change the classification performance. They concluded that a simple ADALINE offers a perfect balance between accuracy and simplicity.

The team believe that their work has important implications for multiple photonic technologies, such as LiDAR and microscopy of biological materials. With their method, fewer measurements are needed for classification, enabling researchers to identify light sources much more quickly. In certain applications, such as microscopy, this means that they can limit light damage, since they don’t have to illuminate the sample nearly as many times when taking measurements.

Read the research in full

Identification of light sources using machine learning

Chenglong You, Mario A. Quiroz-Juárez, Aidan Lambert, Narayan Bhusal, Chao Dong, Armando Perez-Leija, Amir Javaid, Roberto de J. León-Montiel, and Omar S. Magaña-Loaiza

Applied Physics Reviews (2020)

The work is also posted on arXiv.