AIhub.org

Four ways artificial intelligence is helping us learn about the universe

By Ashley Spindler, University of Hertfordshire

Astronomy is all about data. The universe is getting bigger and so too is the amount of information we have about it. But some of the biggest challenges of the next generation of astronomy lie in just how we’re going to study all the data we’re collecting.

To take on these challenges, astronomers are turning to machine learning and artificial intelligence (AI) to build new tools to rapidly search for the next big breakthroughs. Here are four ways AI is helping astronomers.

1. Planet hunting

There are a few ways to find a planet, but the most successful has been to study transits. When an exoplanet passes in front of its parent star, it blocks some of the light we can see.

By observing many orbits of an exoplanet, astronomers build a picture of the dips in the light, which they can use to identify the planet’s properties – such as its mass, size and distance from its star. Nasa’s Kepler space telescope employed this technique to great success by watching thousands of stars at once, keeping an eye out for the telltale dips caused by planets.
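Two simple relations sit behind this. For a dark planet crossing a uniformly bright star, the fraction of light blocked is roughly the square of the ratio of the planet's radius to the star's, and the time between successive dips gives the orbital period. The sketch below turns those relations into code. It ignores limb darkening and grazing transits, and the function names and transit times are illustrative, not taken from any survey pipeline.

```python
import math

SUN_RADIUS_KM = 695_700.0  # nominal solar radius (IAU 2015)
EARTH_RADIUS_KM = 6_371.0  # mean Earth radius


def transit_depth(planet_radius_km, star_radius_km):
    """Fraction of starlight blocked while the planet is fully in front of the star.

    Assumes a dark planet crossing a uniformly bright stellar disc
    (no limb darkening, no grazing transit).
    """
    return (planet_radius_km / star_radius_km) ** 2


def planet_radius_from_depth(depth, star_radius_km):
    """Invert the relation above: R_planet = R_star * sqrt(depth)."""
    return star_radius_km * math.sqrt(depth)


def period_from_transit_times(mid_transit_days):
    """Average spacing between successive mid-transit times, in days."""
    gaps = [later - earlier for earlier, later in zip(mid_transit_days, mid_transit_days[1:])]
    return sum(gaps) / len(gaps)


depth = transit_depth(EARTH_RADIUS_KM, SUN_RADIUS_KM)
print(f"Earth-like dip: {depth:.2e} ({depth * 1e6:.0f} parts per million)")
print(planet_radius_from_depth(depth, SUN_RADIUS_KM))  # recovers about 6371 km
print(period_from_transit_times([0.0, 365.25, 730.5]))  # hypothetical transit times
```

For an Earth-sized planet around a Sun-sized star the dip is only about 84 parts per million, which gives a sense of why spotting such planets takes practice.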

Humans are pretty good at seeing these dips, but it’s a skill that takes time to develop. With more missions devoted to finding new exoplanets, such as Nasa’s TESS (Transiting Exoplanet Survey Satellite), humans just can’t keep up. This is where AI comes in.

Time-series analysis techniques – which analyse data as a sequence ordered in time – have been combined with a type of AI to identify the signals of exoplanets with up to 96% accuracy.
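The study's own method is not reproduced here. As a hedged illustration of the time-series half only, the sketch below runs a simplified box search, in the spirit of the Box Least Squares method, over a made-up light curve: it folds the data at each trial period and phase offset, then scores how deep and how well sampled the resulting dip is. The helper names, the synthetic data and every parameter value are assumptions for illustration.

```python
import math
import random


def make_light_curve(n_samples, period, duration, depth, noise, seed=0):
    """Hypothetical test data: flux of 1.0 with box-shaped dips plus Gaussian noise."""
    rng = random.Random(seed)
    return [
        1.0 - (depth if i % period < duration else 0.0) + rng.gauss(0.0, noise)
        for i in range(n_samples)
    ]


def box_search(flux, trial_periods, duration):
    """Fold at each trial period and phase offset; return (score, period, offset).

    Score = (mean flux outside the box - mean flux inside) * sqrt(points inside),
    a signal-to-noise-like statistic, so that multiples of the true period
    (which fold fewer dips into the box) score lower than the period itself.
    """
    total = sum(flux)
    best = (0.0, None, None)
    for p in trial_periods:
        for offset in range(p):
            in_sum, in_n = 0.0, 0
            for i, f in enumerate(flux):
                if (i - offset) % p < duration:  # sample falls inside the folded box
                    in_sum += f
                    in_n += 1
            out_n = len(flux) - in_n
            if in_n == 0 or out_n == 0:
                continue
            depth = (total - in_sum) / out_n - in_sum / in_n
            score = depth * math.sqrt(in_n)
            if score > best[0]:
                best = (score, p, offset)
    return best


flux = make_light_curve(n_samples=600, period=37, duration=3, depth=0.01, noise=0.002)
score, period, offset = box_search(flux, trial_periods=range(10, 60), duration=3)
print(f"best period: {period} samples, phase offset: {offset}, score: {score:.3f}")
```

On this synthetic curve the search recovers the injected 37-sample period. Real searches work in physical time units and must cope with gaps and stellar variability; in many pipelines, candidates flagged by a search like this are then passed to a trained classifier for vetting.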

2. Gravitational waves

Time-series models aren’t just useful for finding exoplanets; they are also well suited to finding the signals of the most catastrophic events in the universe – mergers between black holes and neutron stars.

When these incredibly dense bodies spiral in towards each other and merge, they send out ripples in space-time that can be detected by measuring faint signals here on Earth. The gravitational-wave detector collaborations Ligo and Virgo have identified the signals of dozens of these events, all with the help of machine learning.

By training models on simulated data of black hole mergers, the teams at Ligo and Virgo can identify potential events within moments of them happening and send out alerts to astronomers around the world to turn their telescopes in the right direction.
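A long-established technique in gravitational-wave searches, matched filtering, also leans on simulated signals: it compares the data against a bank of predicted waveforms and looks for a strong match. The sketch below shows that swapped-in technique, not the collaborations' actual pipelines or machine-learning models, using a single made-up "chirp" hidden in random noise. The template shape, amplitudes and helper names are all hypothetical.

```python
import math
import random


def chirp_template(n, f_start, f_end):
    """Toy 'chirp': a sine wave whose frequency rises linearly from f_start to
    f_end (cycles per sample), loosely mimicking the rising pitch of an
    inspiral. This is not a physical waveform model."""
    return [
        math.sin(2 * math.pi * (f_start * k + 0.5 * (f_end - f_start) * k * k / n))
        for k in range(n)
    ]


def matched_filter(data, template):
    """Slide the template along the data; return (best_lag, best_correlation)."""
    m = len(template)
    best_lag, best_corr = 0, -math.inf
    for lag in range(len(data) - m + 1):
        corr = sum(d * h for d, h in zip(data[lag:lag + m], template))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return best_lag, best_corr


rng = random.Random(42)
template = chirp_template(500, f_start=0.05, f_end=0.35)

# Simulated detector output: unit-variance noise with a weak copy of the
# template hidden at a known position (amplitude 0.7, below the noise sigma of 1.0).
data = [rng.gauss(0.0, 1.0) for _ in range(3000)]
true_offset = 1234
for k, h in enumerate(template):
    data[true_offset + k] += 0.7 * h

lag, corr = matched_filter(data, template)
snr = corr / math.sqrt(sum(h * h for h in template))  # valid because noise sigma is 1
print(f"signal found at sample {lag} (injected at {true_offset}), SNR ~ {snr:.1f}")
```

The injected signal is weaker than the noise at every individual sample, yet summing over all 500 samples of the template lifts it well clear of the background.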

3. The changing sky

When the Vera Rubin Observatory, currently being built in Chile, comes online, it will survey the entire night sky every night – collecting over 80 terabytes of images in one go – to see how the stars and galaxies in the universe vary with time. One terabyte is 8,000,000,000,000 bits.

Over the course of the planned operations, the Legacy Survey of Space and Time being undertaken by Rubin will collect and process hundreds of petabytes of data. To put it in context, 100 petabytes is about the space it takes to store every photo on Facebook, or about 700 years of full high-definition video.
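These data volumes are easy to sanity-check. Taking the article's figures at face value and assuming decimal (SI) units, where a terabyte is 10^12 bytes and a petabyte 10^15 bytes, the sketch below confirms the bits-per-terabyte figure, converts petabytes to terabytes, and works out the average video bitrate implied by "100 petabytes is about 700 years of full high-definition video". The constant and function names are illustrative.

```python
BITS_PER_BYTE = 8
TERABYTE = 10**12  # bytes, decimal SI prefix
PETABYTE = 10**15  # bytes, decimal SI prefix
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year


def to_bits(n_bytes):
    """Convert a size in bytes to bits."""
    return n_bytes * BITS_PER_BYTE


def implied_bitrate(total_bytes, years):
    """Average bits per second needed to fill total_bytes over the given years of playback."""
    return to_bits(total_bytes) / (years * SECONDS_PER_YEAR)


print(to_bits(TERABYTE))                            # bits in one terabyte
print(100 * PETABYTE // TERABYTE)                   # terabytes in 100 petabytes
print(implied_bitrate(100 * PETABYTE, 700) / 1e6)   # megabits per second
```

The last figure comes out at roughly 36 megabits per second, comparable to high-quality full-HD video, so the comparison is plausible.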

You won’t be able to just log onto the servers and download that data, and even if you did, you wouldn’t be able to find what you’re looking for.

Machine learning techniques will be used to search these next-generation surveys and highlight the important data. For example, one algorithm might be searching the images for rare events such as supernovae – dramatic explosions at the end of a star’s life – and another might be on the lookout for quasars. By training computers to recognise the signals of particular astronomical phenomena, the team will be able to get the right data to the right people.
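The article does not say which algorithms the Rubin teams will use. As a hedged sketch of the general idea, training a computer to recognise a class of signal and routing it to the right people, the code below uses one of the simplest supervised classifiers, nearest-centroid: it averages a few labelled examples per class and assigns a new event to the closest average. The two features, every number and the team names are invented for illustration.

```python
from math import dist

# Invented labelled examples: (peak brightening in magnitudes, variability timescale in days).
TRAINING = {
    "supernova": [(3.0, 20.0), (2.5, 30.0), (3.5, 25.0)],
    "quasar": [(0.3, 300.0), (0.5, 400.0), (0.4, 350.0)],
}

# Hypothetical routing table: which group should see each class of event.
ROUTES = {"supernova": "supernova team", "quasar": "quasar team"}


def fit_centroids(training):
    """Average the feature vectors of each class."""
    return {
        label: tuple(sum(values) / len(values) for values in zip(*points))
        for label, points in training.items()
    }


def classify(features, centroids):
    """Return the label whose centroid is nearest in Euclidean distance."""
    return min(centroids, key=lambda label: dist(features, centroids[label]))


centroids = fit_centroids(TRAINING)
for event in [(2.8, 22.0), (0.35, 320.0)]:
    label = classify(event, centroids)
    print(event, "->", label, "->", ROUTES[label])
```

In practice, features on such different scales would normally be standardised first, and much richer models are typically used; nearest-centroid simply keeps the example short.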

4. Gravitational lenses

As we collect more and more data on the universe, we sometimes even have to curate and throw away data that isn’t useful. So how can we find the rarest objects in these swathes of data?

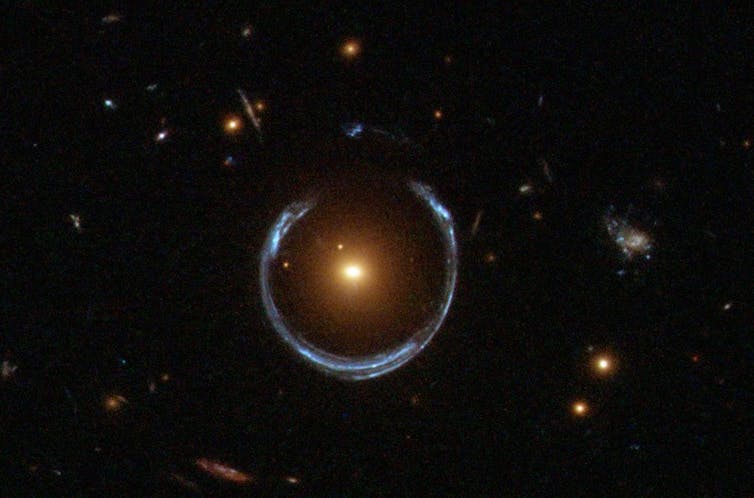

One celestial phenomenon that excites many astronomers is strong gravitational lensing. This happens when two galaxies line up along our line of sight and the nearer galaxy’s gravity acts as a lens, magnifying the more distant object and creating rings, crosses and double images.

Finding these lenses is like finding a needle in a haystack – a haystack the size of the observable universe. It’s a search that’s only going to get harder as we collect more and more images of galaxies.

In 2018, astronomers from around the world took part in the Strong Gravitational Lens Finding Challenge where they competed to see who could make the best algorithm for finding these lenses automatically.

The winner of this challenge used a model called a convolutional neural network, which learns to break down images using different filters until it can classify them as containing a lens or not. Surprisingly, these models were even better than people, finding subtle differences in the images that we humans have trouble noticing.
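The winning network itself is not reproduced here. As a hedged sketch of the basic operation a convolutional neural network repeats many times, the code below slides one 3x3 filter over two tiny made-up images, one containing a ring of bright pixels and one a solid blob, then applies the ReLU activation and a max-pooling step common in CNNs. In a real network the filter values are learned from labelled training images and many filters and layers are stacked; here the single filter is written by hand, and both images and the filter are hypothetical.

```python
def conv2d(image, kernel):
    """'Valid' 2D cross-correlation, the operation deep-learning libraries call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(kernel[a][b] * image[i + a][j + b] for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map):
    """Keep positive responses, zero out the rest."""
    return [[max(0, v) for v in row] for row in feature_map]


def global_max_pool(feature_map):
    """Summarise a feature map by its strongest response."""
    return max(max(row) for row in feature_map)


# Hand-written filter: rewards light in the eight surrounding pixels and
# penalises light in the centre, so the ring below scores high and the blob scores zero.
RING_FILTER = [
    [1, 1, 1],
    [1, -8, 1],
    [1, 1, 1],
]

RING = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 0, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]

BLOB = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]

for name, image in [("ring", RING), ("blob", BLOB)]:
    score = global_max_pool(relu(conv2d(image, RING_FILTER)))
    print(name, score)
```

This prints a score of 8 for the ring and 0 for the blob: one filter already separates the two shapes, and a trained network combines many such learned filters to pick out far subtler patterns.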

Over the next decade, using new instruments like the Vera Rubin Observatory, astronomers will collect petabytes of data, or thousands of terabytes. As we peer deeper into the universe, astronomers will increasingly rely on machine-learning techniques.

Ashley Spindler, Research Fellow, Astrophysics, University of Hertfordshire

This article is republished from The Conversation under a Creative Commons license. Read the original article.