ΑΙhub.org

Machine learning technique to help keep your personal data your own

You may not realise it, but without your consent the images you post on social media, including your profile, are harvested and used for training facial recognition systems driven by machine learning.

With this data, over which you now have almost no control, it’s easy for these systems to be used or misused to identify you and your friends perhaps, for example, from CCTV footage.

But what if there was a way to protect your data while still using it freely so that your friends can still see your photos but AI systems are blocked from exploiting these same images?

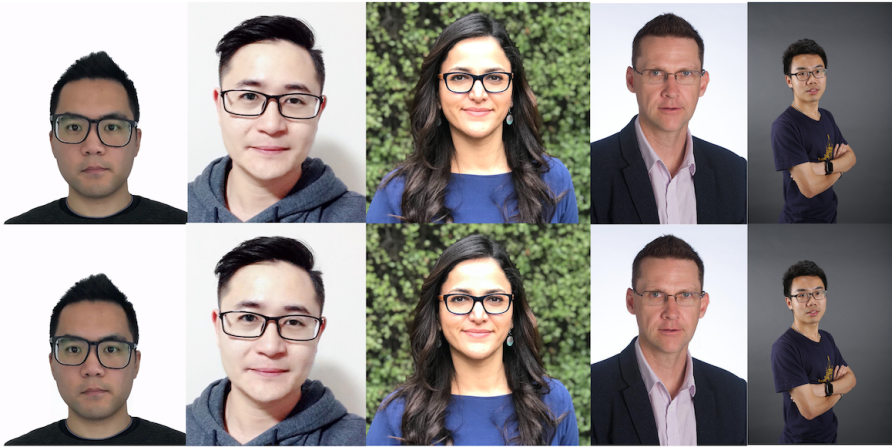

In our new research, we have shown that we can actually do this by using AI against itself to minimally adjust an image that makes it effectively ‘unlearnable’ to AI.

We have devised a machine learning-based technique that identifies and changes just enough pixels in an image to confuse AI, and turn it to an ‘unlearnable’ image. The change is very small and imperceptible to human eyes, but it introduces enough ‘noise’ into an image to make it useless for training AI.

With this technique, you could potentially simply tag your data with unlearnable noise that prevents it being exploited.

Many modern AI systems are based on artificial neural networks that learn to perform a task by repeatedly going through examples – like images of cats, if the AI is learning to identify cats. With each example, the program adjusts its parameters slightly to improve the results. This is what’s meant by ‘training’ AI. Researchers use the term “deep neural network” to differentiate sophisticated modern artificial neural networks.

This deep machine learning is now widely in use – from driving search engines to guiding medical surgery.

A key challenge for training deep neural networks is that the programs usually require a huge volume of data to learn from. The abundant ‘free’ data on the Internet has provided an easy solution to this.

Huge data sets are readily available, like the 80 Million Tiny Images collection and ImageNet, but they pose significant privacy and bias problems.

Our technique takes advantage of a key weakness in deep machine learning models – they are lazy learners. If the model believes that an example does not improve its performance – so, it’s an easy example that it has already learned – it will ignore it.

This weakness motivated the design of our model – our introduced noise fools deep neural networks into believing that there is nothing to learn from the protected images.

In addition, we set a constraint on the noise to ensure it is imperceptible to the human eye. Generally, a person wouldn’t notice a tiny change to an image that is less than 16 pixel values, however, this small change is sufficient to disrupt the model’s learning behaviour.

If someone uses unlearnable data to train deep learning models, the model will perform so poorly that it will be close to just making random guesses on new data.

It should also be possible to use a similar technique to protect other types of data.

Take audio as an example, we could use sound pressure level (or acoustic pressure level) as the metric to measure the difference between the original audio and unlearnable audio.

A small change in sound pressure level is indistinguishable for humans, but this change can make the audio data unlearnable for machines.

It’s early days, but we believe this represents a step change in personal data protection, and there are several potential applications for the technology. For example, you could create unlearnable images before you share them on the Internet, protecting both yourself and your friends.

When a facial recognition model trained with your unlearnable online social media images and your unmodified pictures are captured by CCTV, the facial recognition system will not recognise you anymore.

On a larger scale, companies could release protected proprietary data without worrying it will be used for training deep learning models.

Our work only explores the technical challenges and opens up the possibilities. A lot of work still needs to be done for real-world applications, like developing an app that everyone can use.

But it is feasible.

People have a ‘digital right’ to control their own data. In the same way you can protect your house, you should be able to protect your data.

This article was first published on Pursuit. Read the original article.