AIhub.org

Using reinforcement learning for control of direct ink writing

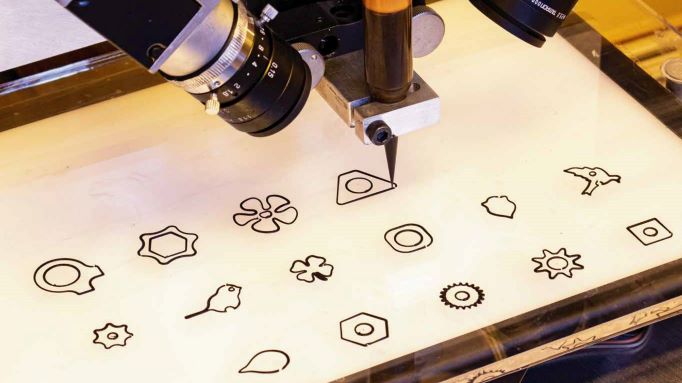

Closed-loop printing enhanced by machine learning. © Michal Piovarči/ISTA

Using fluids for 3D printing may seem paradoxical at first glance, but not all fluids are watery. Many useful materials, from inks to hydrogels, are far more viscous and are therefore suitable for printing. Yet their potential remains relatively unexplored because their behaviour is hard to control. Now, researchers in the Bickel group at the Institute of Science and Technology Austria (ISTA) are using machine learning in virtual environments to achieve better results in real-world experiments.

3D printing is on the rise, and many people are familiar with its characteristic plastic structures. However, attention has also turned to other printing materials, such as inks, viscous pastes and hydrogels, which could potentially be used to 3D-print biomaterials and even food. But printing such fluids is challenging. Controlling them precisely requires painstaking trial-and-error experiments, because they tend to deform and spread after deposition.

A team of researchers, including Michal Piovarči and Bernd Bickel, are tackling these challenges. In their laboratories at the Institute of Science and Technology Austria (ISTA), they are using reinforcement learning, a type of machine learning, to improve the printing of viscous materials. The results were presented at SIGGRAPH, the annual conference of the computer graphics and interactive techniques community.

A critical part of manufacturing is identifying the parameters that consistently produce high-quality structures. This rests on an implicit assumption: that the relationship between parameters and outcome is predictable. However, real processes always exhibit some variability due to the nature of the materials used. With viscous materials this variability is even more pronounced, because they take considerable time to settle after deposition. The question is: how can we understand, and deal with, such complex dynamics?

“Instead of printing thousands of samples, which is not only expensive but also tedious, we put our expertise in computer simulation into action,” responds Piovarči, lead author of the study. While computer graphics often trades physical accuracy for faster simulation, here the team built a simulated environment that accurately mirrors the physical processes. “We modelled the ink’s current and short-horizon future states based on fluid physics. The efficiency of our model allowed us to simulate hundreds of prints simultaneously, far more than we could ever have printed in real experiments. We used the resulting dataset for reinforcement learning and learned how to control the ink and other materials.”
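The idea of learning a control policy from many cheap simulated prints can be illustrated with a deliberately tiny stand-in. This is a sketch under stated assumptions, not the authors' simulator or training algorithm: it assumes a hypothetical one-dimensional "bead width" model in which the deposited material lags behind the commanded flow, a two-parameter linear feedback policy, and basic random search (a simple derivative-free reinforcement learning method) in place of whatever optimizer the study used. Each iteration evaluates several perturbed policies in independent simulated prints. All names and constants (`simulate_print`, `train`, `TARGET_WIDTH`, `LAG`, `STEPS`) are hypothetical.

```python
import random
import statistics

TARGET_WIDTH = 1.0  # hypothetical desired bead width (arbitrary units)
LAG = 0.8           # hypothetical viscous lag: fraction of old flow that persists
STEPS = 50          # hypothetical number of control steps along one path


def simulate_print(theta, seed, noise=0.02):
    """Toy stand-in for one simulated print; returns the episode reward.

    The flow p responds to the commanded flow u only gradually, a crude
    proxy for a viscous ink that keeps moving after it is deposited.
    """
    rng = random.Random(seed)
    k_bias, k_fb = theta
    p, reward = 0.0, 0.0
    for _ in range(STEPS):
        w = p + rng.gauss(0.0, noise)           # observed bead width
        u = k_bias + k_fb * (TARGET_WIDTH - w)  # closed-loop action from observation
        p = LAG * p + (1.0 - LAG) * u           # lagged material response
        reward -= (w - TARGET_WIDTH) ** 2       # penalize width error
    return reward


def train(iterations=200, num_dirs=8, step=0.05, explore=0.1, seed=0):
    """Basic random search: compare +/- perturbed policies in simulated prints."""
    rng = random.Random(seed)
    theta = [0.0, 0.0]
    for _ in range(iterations):
        results = []
        for _ in range(num_dirs):
            delta = [rng.gauss(0.0, 1.0) for _ in theta]
            env_seed = rng.randrange(1_000_000)  # same noise for both probes
            plus = [t + explore * d for t, d in zip(theta, delta)]
            minus = [t - explore * d for t, d in zip(theta, delta)]
            results.append((simulate_print(plus, env_seed),
                            simulate_print(minus, env_seed), delta))
        rewards = [r for r_p, r_m, _ in results for r in (r_p, r_m)]
        sigma = statistics.pstdev(rewards) or 1.0  # normalize the step size
        for i in range(len(theta)):
            grad_i = sum((r_p - r_m) * d[i] for r_p, r_m, d in results)
            theta[i] += step / (num_dirs * sigma) * grad_i
    return theta
```

Starting from a policy that dispenses nothing, `simulate_print([0.0, 0.0], seed=123)` scores about -50, since every step misses the target width by roughly one unit; the parameters returned by `train()` should score far higher. Reusing the same noise seed for each +/- pair keeps the comparison between the two probes fair.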

Learning in virtual environments how to control the ink. © Michal Piovarči/ISTA

The machine learning algorithm learned various policies, including one that controls the movement of the ink-dispensing nozzle at corners so that no unwanted blobs form. The printing apparatus no longer follows the baseline of the desired shape exactly, but instead takes a slightly altered path that ultimately yields better results. To verify that these policies can handle various materials, the researchers trained three models using liquids of different viscosities and tested their method in experiments with inks of various thicknesses.
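To make the idea of an altered path concrete, here is a purely geometric sketch. It is not the learned policy from the study: a single hand-picked, hypothetical `cut` distance stands in for the correction a trained policy would choose from the observed ink state, and the helper name `chamfer_corners` is invented for illustration. Rather than driving the nozzle through each sharp vertex of a closed loop, the path leaves each edge slightly early and rejoins the next edge slightly after the corner.

```python
import math


def chamfer_corners(polygon, cut):
    """Return a closed nozzle path that cuts each corner by `cut` units.

    Hypothetical illustration only: instead of passing through the sharp
    vertex (where unwanted blobs can form), the path leaves the incoming
    edge `cut` units before the vertex and rejoins the outgoing edge
    `cut` units after it. Assumes consecutive vertices are distinct.
    """
    n = len(polygon)
    path = []
    for i in range(n):
        prev_pt, corner, next_pt = polygon[i - 1], polygon[i], polygon[(i + 1) % n]
        # First the point on the incoming edge, then on the outgoing edge.
        for other in (prev_pt, next_pt):
            dx, dy = other[0] - corner[0], other[1] - corner[1]
            length = math.hypot(dx, dy)
            t = min(cut, length / 2) / length  # never cut past an edge midpoint
            path.append((corner[0] + t * dx, corner[1] + t * dy))
    return path
```

For a unit square with `cut=0.1`, each of the four corners is replaced by two points, giving an eight-point loop that begins at (0, 0.1) and (0.1, 0). In the study, choosing such adjustments is left to the learned policy rather than fixed by hand.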

The team opted for closed-loop forms instead of simple lines or writing, because “closed loops represent the standard case for 3D printing and that is our target application,” explains Piovarči. Although the single-layer printing in this project is sufficient for use cases in printed electronics, he wants to add another dimension. “Naturally, three-dimensional objects are our goal, so that one day we can print optical designs, food or functional mechanisms. I find it fascinating that we as the computer graphics community can be the major driving force in machine learning for 3D printing.”

Read the research in full

Closed-Loop Control of Direct Ink Writing via Reinforcement Learning

Michal Piovarči, Michael Foshey, Jie Xu, Timmothy Erps, Vahid Babaei, Piotr Didyk, Szymon Rusinkiewicz, Wojciech Matusik, Bernd Bickel