AIhub.org

AI system for live music accompaniment and improvisation

Electronic music artists and sound designers create complex and unique audio paths by patching modular and standalone synthesizers to achieve a desired sound effect. The process is inherently unpredictable, resulting in sounds that are unique and impossible to replicate, especially in complex audio paths. For this reason, electronic artists often record all of their studio sessions. Electronic artists also often have an organic approach to composition, where they may start with an open mind and find inspiration by playing their instruments. This is in part due to the experimental nature of many electronic instruments and the importance of novel sound effects and textures (e.g., noise and drone) in electronic and electro-acoustic compositions.

Because of these composition and production workflows, artists can accumulate thousands of hours of studio recordings. Many of these recordings end up unused, because sifting through hours of material to find a particular sound is impractical and can take artists out of their creative flow. Further, when electronic artists are working on new compositions, it can be impossible to recreate a spontaneous sound that a modular patch produced in the past. It is also difficult for artists to know what electronic sound will complement a specific musical passage until they hear it.

In our latest work (arXiv preprint), we envisioned and developed a system, LyricJam Sonic, that uses AI to create a real-time generative stream of music from an artist's own catalogue of studio recordings. The system can run in a fully autonomous mode, generating a coherent musical composition assembled from 10-second clips of the artist’s past recordings, together with a stream of generated lyric lines. The aim is to let the artist draw inspiration from potentially unexpected combinations of sounds, as well as from the mood evoked by the generated lyric lines.
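
The article does not describe how the catalogue is segmented. As a minimal sketch, assuming mono audio already loaded as a NumPy array, the recordings could be cut into consecutive 10-second clips and each clip turned into a log-magnitude spectrogram, the kind of input a spectrogram-based model consumes. The function names, the FFT size and hop length, and the choice to drop a trailing partial clip are illustrative assumptions, not details of LyricJam Sonic.

```python
import numpy as np

CLIP_SECONDS = 10  # clip length stated in the article


def slice_into_clips(audio: np.ndarray, sample_rate: int,
                     clip_seconds: float = CLIP_SECONDS) -> list[np.ndarray]:
    """Cut a mono recording into consecutive, non-overlapping fixed-length clips.

    Assumption: a trailing remainder shorter than one clip is dropped.
    """
    clip_len = int(round(clip_seconds * sample_rate))
    n_clips = len(audio) // clip_len
    return [audio[i * clip_len:(i + 1) * clip_len] for i in range(n_clips)]


def log_spectrogram(clip: np.ndarray, n_fft: int = 1024, hop: int = 512) -> np.ndarray:
    """Log-magnitude STFT with a Hann window, shaped (frequency bins, frames)."""
    window = np.hanning(n_fft)
    n_frames = 1 + (len(clip) - n_fft) // hop
    frames = np.stack([clip[t * hop:t * hop + n_fft] * window for t in range(n_frames)])
    magnitude = np.abs(np.fft.rfft(frames, axis=1))
    return np.log1p(magnitude).T


# Usage with 35 seconds of noise standing in for a studio recording.
sr = 22050
recording = np.random.default_rng(0).standard_normal(35 * sr)
clips = slice_into_clips(recording, sr)
spec = log_spectrogram(clips[0])
print(len(clips), spec.shape)
```

Dropping the remainder keeps every clip the same length, which simplifies batching; zero-padding the last clip would be an equally reasonable choice.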

Alternatively, the artist can play a musical instrument (live performance mode) while the system “listens” and responds in real time with a compatible musical accompaniment built from clips in the artist's own catalogue. In this mode, the artist can play a new composition live, hear what the system plays in response, and judge how well the retrieved clips fit the piece they are composing. This helps artists find the clips they need without interrupting their creative flow.
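
In live performance mode, audio typically arrives from the audio interface in small blocks, while the system works with 10-second clips. One way to bridge the two, sketched here under that assumption, is a hypothetical `ClipBuffer` that accumulates blocks and hands back each complete clip as soon as it is available. It is an illustration, not code from LyricJam Sonic.

```python
import numpy as np


class ClipBuffer:
    """Hypothetical helper: collects small audio blocks into fixed-length clips."""

    def __init__(self, sample_rate: int, clip_seconds: float = 10.0):
        self.clip_len = int(round(sample_rate * clip_seconds))
        self._pending: list[np.ndarray] = []
        self._pending_len = 0

    def push(self, block: np.ndarray) -> list[np.ndarray]:
        """Append one block; return every clip completed by it (often none)."""
        self._pending.append(block)
        self._pending_len += len(block)
        if self._pending_len < self.clip_len:
            return []
        data = np.concatenate(self._pending)
        n_full = len(data) // self.clip_len
        clips = [data[i * self.clip_len:(i + 1) * self.clip_len] for i in range(n_full)]
        # Keep the leftover samples as the start of the next clip.
        rest = data[n_full * self.clip_len:]
        self._pending = [rest]
        self._pending_len = len(rest)
        return clips


# Usage: a low sample rate keeps the numbers small (10 s = 10,000 samples).
buf = ClipBuffer(sample_rate=1000)
emitted = []
for k in range(25):  # 25 blocks of 512 samples, each filled with its block index
    emitted.extend(buf.push(np.full(512, k, dtype=np.float32)))
print(len(emitted), buf._pending_len)
```

Concatenating only when a full clip is available avoids copying the growing buffer on every block.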

The system includes neural networks that interact with each other to generate a continuous, ever-evolving stream of music and lyrics. One neural network (a variational autoencoder) learns representations of audio clips from their spectrograms. Another (a conditional variational autoencoder) learns to generate lyric lines conditioned on the spectrogram representations of the corresponding music clips. Finally, a generative adversarial network (GAN) uses the generated lyric line and the previous audio clip to predict the representation of the next audio clip, which a retrieval module then uses to find the best-matching clip in the artist’s catalogue. That clip is crossfaded seamlessly into the continuous music stream played to the artist in real time. In autonomous mode, the selected clip is then used to generate another lyric line and predict the next audio clip, so the process perpetuates itself. In live performance mode, the artist plays an instrument while the system receives audio clips of the performance in real time. Each clip received from the artist is used to generate a lyric line and predict the next clip of the generative musical accompaniment.
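
The generate, retrieve and crossfade cycle can be sketched as follows. This is a hedged illustration: the CVAE and GAN are replaced by caller-supplied stand-in functions, retrieval is assumed to be a cosine-similarity nearest-neighbour search over the catalogue's VAE embeddings, the recently-played exclusion window is our own addition, and the equal-power curve is one common choice, since the article says only that a crossfade is used.

```python
import numpy as np


def retrieve(predicted: np.ndarray, catalogue: np.ndarray, exclude: set[int]) -> int:
    """Index of the catalogue embedding most cosine-similar to `predicted`.

    Skipping recently played clips (`exclude`) is an assumption to avoid loops.
    """
    norms = np.linalg.norm(catalogue, axis=1) * np.linalg.norm(predicted)
    sims = catalogue @ predicted / np.maximum(norms, 1e-12)
    for idx in exclude:
        sims[idx] = -np.inf
    return int(np.argmax(sims))


def equal_power_crossfade(a: np.ndarray, b: np.ndarray, fade_len: int) -> np.ndarray:
    """Join clip `a` to clip `b`, overlapping a's last and b's first `fade_len` samples.

    Requires 1 <= fade_len <= min(len(a), len(b)).
    """
    t = np.linspace(0.0, np.pi / 2, fade_len)
    overlap = a[-fade_len:] * np.cos(t) + b[:fade_len] * np.sin(t)
    return np.concatenate([a[:-fade_len], overlap, b[fade_len:]])


def autonomous_loop(start, catalogue, generate_lyric, predict_next, steps, history=3):
    """Clip -> lyric line -> predicted embedding -> retrieved clip, repeated."""
    played, lyrics = [start], []
    for _ in range(steps):
        current = catalogue[played[-1]]
        line = generate_lyric(current)           # stand-in for the CVAE
        predicted = predict_next(current, line)  # stand-in for the GAN
        played.append(retrieve(predicted, catalogue, set(played[-history:])))
        lyrics.append(line)
    return played, lyrics


# Toy usage: four 2-D "embeddings" on a circle, 30 degrees apart.
angles = np.deg2rad([0, 30, 60, 90])
catalogue = np.stack([np.cos(angles), np.sin(angles)], axis=1)
rot = np.array([[np.cos(np.pi / 6), -np.sin(np.pi / 6)],
                [np.sin(np.pi / 6), np.cos(np.pi / 6)]])
played, lyrics = autonomous_loop(
    start=0,
    catalogue=catalogue,
    generate_lyric=lambda emb: f"line for {np.round(emb, 2)}",  # placeholder text
    predict_next=lambda emb, line: rot @ emb,                   # ignores the lyric
    steps=3,
)
mix = equal_power_crossfade(np.ones(100), np.ones(100), fade_len=20)
print(played, mix.shape)
```

Because the stand-in predictor rotates the current embedding by 30 degrees, the loop walks through the toy clips in order. An equal-power curve keeps perceived loudness roughly steady when the two clips are uncorrelated; for near-identical material a linear fade avoids a bump in level.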

This work builds on our previous research on LyricJam, a system that generates lyrics in real time from the audio of live music played by an artist (ICCC’21). Its neural network was trained on an aligned dataset of lyrics and music, which leads it to learn associations between the two modalities. In earlier research (NLP4MusA’20), through user studies and statistical analysis, we found that our neural network architecture can learn emotional associations between lyric lines and their corresponding music clips, which allows it to generate emotionally compatible lines for instrumental music at inference time. For example, the system was found to generate different lyric lines for spectrograms of ambient music than for those of rhythm-driven music.

In our latest work, we continued exploring the interdependence of the two modalities, lyrics and music. Specifically, this time we were interested in whether the generated lyric lines can be used to find emotionally compatible audio clips in an artist’s catalogue. The best results were obtained when a lyric line was used together with the previous clip to predict the next clip. We found that the previous clip influences the prediction more strongly than the lyric line does, which is desirable, as we want the next clip to be musically consistent with the previous one. The lyric line acts more as a nudge towards a certain mood or musical theme and does not cause a drastic change in the generated composition.

Analysis of the next-clip predictions showed that the system is capable of learning important musical characteristics from spectrograms, such as musical key, and of using them to create a coherent generative composition. We found that the system consistently predicted piano clips played in the same key as the previous clip, even when they originated from different source compositions. This key sensitivity also carries over across instruments.
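
The article does not say how key agreement between clips was judged. To spot-check it on your own material, one standard approach (swapped in here, not the system's method) is Krumhansl-Schmuckler key finding: fold the spectrum into a 12-bin chroma vector and correlate it with the Krumhansl-Kessler major and minor profiles rotated to all 12 tonics. The sketch assumes mono NumPy audio and ignores tuning offsets and harmonics, so treat its output as a rough guide.

```python
import numpy as np

# Krumhansl-Kessler key profiles, index 0 = tonic.
MAJOR = np.array([6.35, 2.23, 3.48, 2.33, 4.38, 4.09, 2.52, 5.19, 2.39, 3.66, 2.29, 2.88])
MINOR = np.array([6.33, 2.68, 3.52, 5.38, 2.60, 3.53, 2.54, 4.75, 3.98, 2.69, 3.34, 3.17])
NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def chroma(clip: np.ndarray, sample_rate: int, fmin=27.5, fmax=4200.0) -> np.ndarray:
    """Fold a Hann-windowed magnitude spectrum into 12 pitch classes (C = 0)."""
    spectrum = np.abs(np.fft.rfft(clip * np.hanning(len(clip))))
    freqs = np.fft.rfftfreq(len(clip), d=1.0 / sample_rate)
    keep = (freqs >= fmin) & (freqs <= fmax)
    midi = np.round(69 + 12 * np.log2(freqs[keep] / 440.0)).astype(int)
    return np.bincount(midi % 12, weights=spectrum[keep], minlength=12)


def estimate_key(clip: np.ndarray, sample_rate: int) -> str:
    """Return the best-correlating of the 24 major/minor keys, e.g. 'C major'."""
    c = chroma(clip, sample_rate)
    best, best_score = "", -np.inf
    for tonic in range(12):
        for mode, profile in (("major", MAJOR), ("minor", MINOR)):
            # np.roll moves the profile's tonic weight to index `tonic`.
            score = np.corrcoef(c, np.roll(profile, tonic))[0, 1]
            if score > best_score:
                best, best_score = f"{NAMES[tonic]} {mode}", score
    return best


# Usage: one-second triads built from sine tones (frequencies rounded to whole Hz).
sr = 22050
t = np.arange(sr) / sr
c_major = sum(np.sin(2 * np.pi * f * t) for f in (262, 330, 392))  # C4, E4, G4
a_minor = sum(np.sin(2 * np.pi * f * t) for f in (220, 262, 330))  # A3, C4, E4
print(estimate_key(c_major, sr), estimate_key(a_minor, sr))
```

Comparing `estimate_key` on consecutive clips of a generated stream would give a crude measure of how often neighbouring clips share a key.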

Playing strings on a keyboard in a certain key leads the system to create a compatible stream from clips recorded on different instruments (piano, a drone synthesizer and electric guitar), all in the same key.

Isolated audio track of the composition, created by LyricJam Sonic in response to the same live performance.

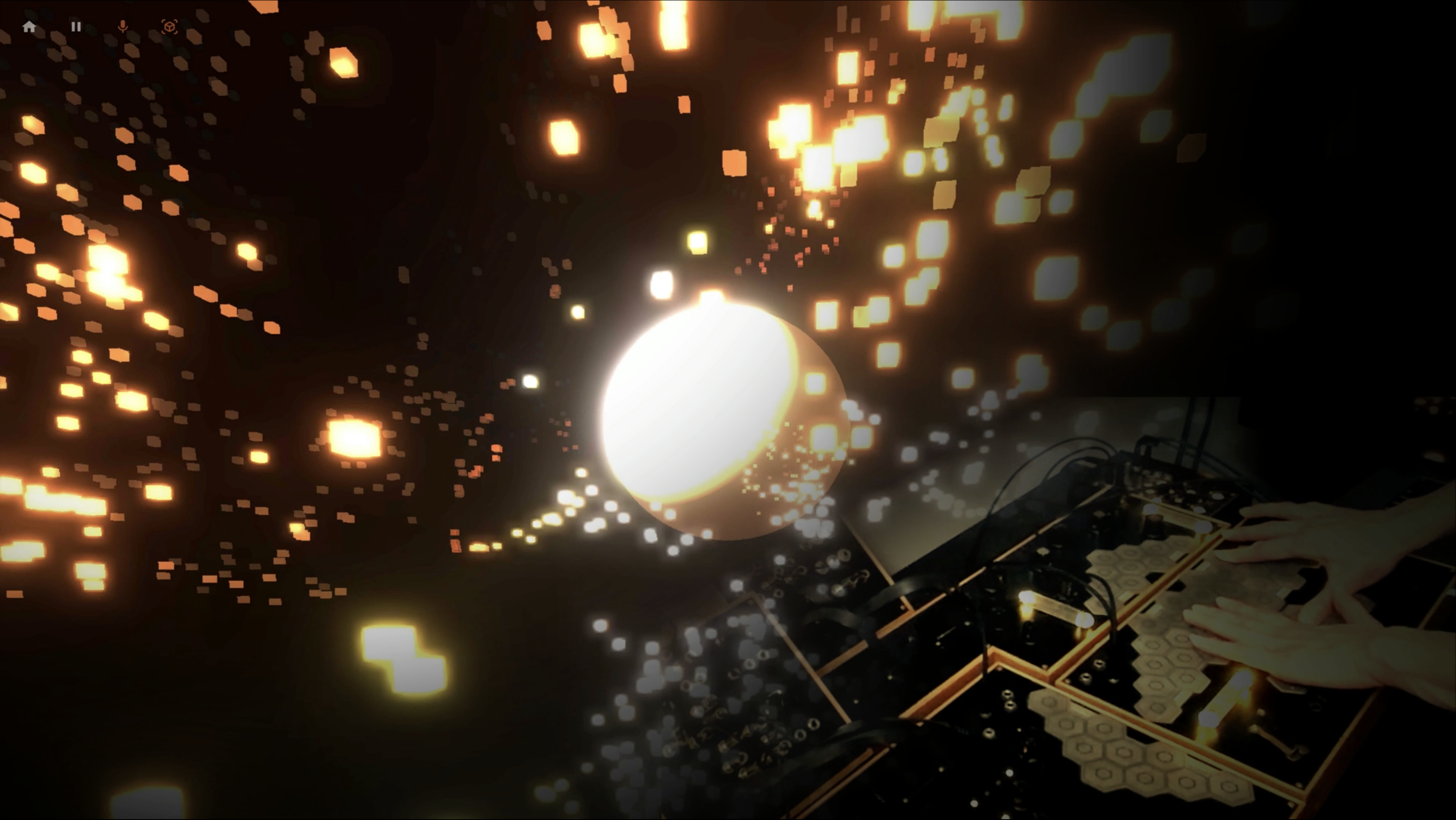

Listening tests showed that musicians perceived compositions created by LyricJam Sonic as more musically coherent than compositions assembled randomly from the same musical dataset. The LyricJam Sonic compositions were created autonomously by the system from more than 4,000 10-second clips of studio recordings by a single electronic music artist. The system can thus also be used by artists to create interactive compositions for their listeners, who can experience ever-changing, never-repeating music created in real time from the artist’s entire repertoire. For this purpose, we designed an interactive app that visualizes the audio clips in 3D space, showing the position of each clip relative to all other clips in the multidimensional latent space learned by the neural network.
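
The article does not specify how the app reduces the latent space to three dimensions. A minimal sketch, using principal component analysis as a stand-in (t-SNE or UMAP would be common nonlinear alternatives), projects each clip's latent vector onto the three directions of greatest variance. The 64-dimensional random latents below are placeholders, not the model's real embeddings.

```python
import numpy as np


def project_to_3d(latents: np.ndarray) -> np.ndarray:
    """Project N x D latent vectors onto their top three principal components."""
    centered = latents - latents.mean(axis=0)
    # Rows of vt are principal directions, ordered by decreasing singular value.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:3].T


# Usage with placeholder latents: 4000 clips, 64 dimensions of decreasing spread.
rng = np.random.default_rng(0)
latents = rng.standard_normal((4000, 64)) * np.linspace(3.0, 0.5, 64)
coords = project_to_3d(latents)
print(coords.shape)
```

PCA is linear and deterministic, so a clip keeps the same position between sessions; nonlinear methods usually separate clusters more clearly at the cost of distorting global distances.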

While using the system, we discovered that it can learn musical connections between sounds that were not obvious to us as musicians and became clear only once we heard them. This opens up many possibilities for artists to discover connections between their recordings that they had not noticed before.

Users can experience LyricJam Sonic by watching the system autonomously locate the next musically compatible clip, by interactively exploring the 3D visualization to influence the course of the generative musical composition, or by taking part in a live musical performance together with the system as it provides musical accompaniment in real-time.

LyricJam Sonic can be experienced as an interactive web app at LyricJam.ai.

References

Olga Vechtomova and Gaurav Sahu. LyricJam Sonic: A Generative System for Real-Time Composition and Musical Improvisation. arXiv preprint arXiv:2210.15638, 2022.

Olga Vechtomova, Gaurav Sahu, and Dhruv Kumar. LyricJam: A system for generating lyrics for live instrumental music. In Proceedings of the 12th International Conference on Computational Creativity (ICCC’21), 2021.

Olga Vechtomova, Gaurav Sahu, and Dhruv Kumar. Generation of lyrics lines conditioned on music audio clips. In Proceedings of the 1st Workshop on NLP for Music and Audio (NLP4MusA), pages 33–37, October 2020. Association for Computational Linguistics.