AIhub.org

Interview with Rose Nakasi: using machine learning and smartphones to help diagnose malaria

Rose Nakasi and her colleagues have developed a machine-learning method to detect malaria parasites in blood samples. We spoke to Rose about the motivation for this project, the progress so far, and what they are planning next.

Could you tell us about the problem you are trying to solve and how you approached it?

The problem that we are trying to solve concerns microscopy-based malaria diagnosis. The motivation for this research is that malaria is one of the most endemic diseases in sub-Saharan Africa, Uganda included. The major problem is that the gold-standard confirmatory test for diagnosis uses a microscope, and in our setting we have a shortage of skilled lab microscopists who are able to diagnose the disease correctly. In addition, under the standard operating procedure for microscopy, microscopists are not supposed to look at more than 30 slides in a day (which usually means 30 patients a day).

When many patients go to a health facility, instead of the microscopy test, they are either diagnosed based on symptoms or diagnosed using rapid diagnostic tests (RDTs). However, we know that when the disease is at low parasitemia, RDTs often don’t correctly detect the disease. That means that sometimes patients go without a correct diagnosis.

What we’re trying to do is leverage some of the available equipment to solve this problem. Right now, most of the public health facilities have access to smartphones and microscopes. So, based on the availability of that equipment, and also the advancement of technology and artificial intelligence, we wanted to leverage those capabilities to support the current gold standard for the diagnosis of not only malaria but also other microscopically diagnosed diseases.

How does the process that you’ve developed work?

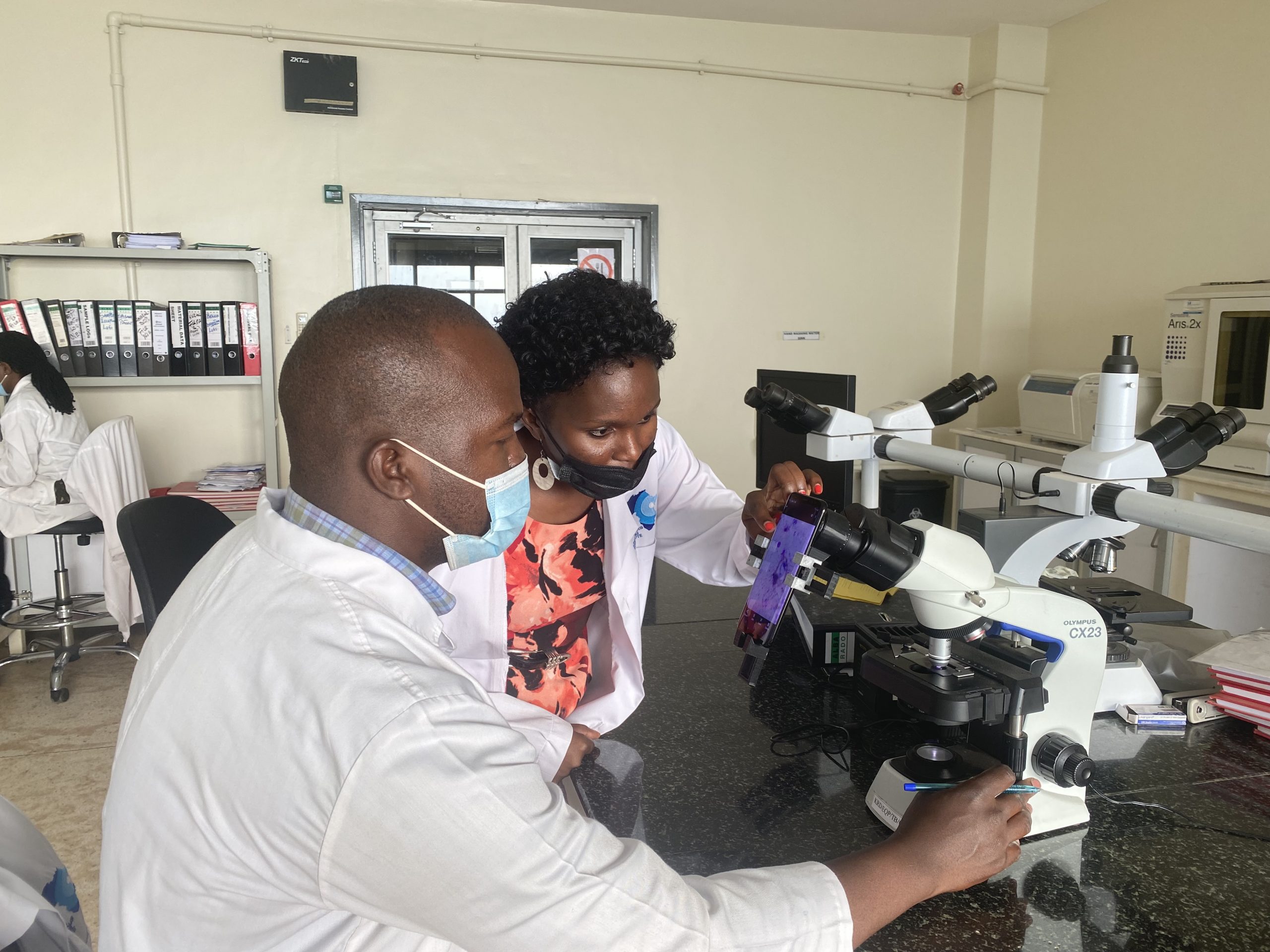

We use thick blood smear samples, which have been taken by microscopists. Each sample slide is put under the microscope, and we then use a smartphone to take a picture of the view through the eyepiece so that we capture the detail of the image. These images are used to train machine learning models to identify and classify parasites in the blood sample.

What were the hardware challenges of this research?

In order to capture the images from the microscope on the smartphone, we needed to align the camera of the smartphone with the focal point of the eyepiece of the microscope. That way we don’t lose detail of what’s coming out of the microscope. For this, we had to develop an adapter that we attached to the eyepiece of the microscope. This adapter is 3D-printable.

The microscope and smartphone set-up.

Could you talk a bit about the machine learning methods that you used in the research?

The research has developed over a period of time. We started the work in 2015 and one of our early breakthroughs for this research was in the use of shallow machine learning techniques. Over time we’ve evolved our techniques and now use deep learning, specifically a convolutional neural network (CNN) model which we developed from scratch.

Our first dataset contained around 1182 images of thick blood smears, and what we are looking for in them are the parasites. Our task is therefore to detect the parasites in the blood sample. Using the images taken from the microscope, we carried out image annotation to mark the objects of interest, the parasites. These were classified as positive patches. The other parts, not containing parasites, were treated as negative patches. So that was our image processing technique.
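As an illustration of the positive/negative patch idea described above, here is a minimal sketch in Python. It is not the team's actual code: the bounding-box annotation format, the 50-pixel patch size, the non-overlapping grid, and the function name `label_patches` are all assumptions made for the example. A patch is marked positive when it contains the centre of an annotated parasite.

```python
from typing import List, Tuple

# Hypothetical annotation format: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[int, int, int, int]


def label_patches(width: int, height: int, boxes: List[Box],
                  patch: int = 50, stride: int = 50) -> List[Tuple[int, int, int]]:
    """Slide a square window over the image and label each patch.

    Returns (x, y, label) per patch, where (x, y) is the top-left corner
    and label is 1 if the patch contains the centre of any annotated
    parasite box, otherwise 0.
    """
    centres = [((x0 + x1) / 2, (y0 + y1) / 2) for x0, y0, x1, y1 in boxes]
    labelled = []
    for y in range(0, height - patch + 1, stride):
        for x in range(0, width - patch + 1, stride):
            positive = any(x <= cx < x + patch and y <= cy < y + patch
                           for cx, cy in centres)
            labelled.append((x, y, int(positive)))
    return labelled


# Example: a 200x100 image with two annotated parasites (invented coordinates).
labels = label_patches(200, 100, [(10, 10, 30, 30), (110, 60, 130, 80)])
positives = [p for p in labels if p[2] == 1]
```

With a grid like this, most patches come out negative, which is the imbalance discussed in the next answer.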

One of the things that we realized was that in such an image you have a large number of negative patches (anything that’s not a parasite) and a much smaller number of parasites, so you see a class imbalance. So, we had to do augmentation to increase the number of positive patches while down-sampling the number of negative patches. This gave us a more balanced classification problem.
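The balancing step can be sketched as follows. The interview does not say which augmentations were used, so the flips and 180-degree rotation here are assumptions, as are the helper names `augment` and `balance` and the choice to down-sample negatives to exactly the number of augmented positives.

```python
import random
from typing import List, Tuple

Patch = List[List[int]]  # a small greyscale patch as rows of pixel values


def augment(patch: Patch) -> List[Patch]:
    """Return the patch plus its horizontal flip, vertical flip,
    and 180-degree rotation (assumed augmentations)."""
    h_flip = [row[::-1] for row in patch]
    v_flip = patch[::-1]
    rot180 = [row[::-1] for row in patch[::-1]]
    return [patch, h_flip, v_flip, rot180]


def balance(positives: List[Patch], negatives: List[Patch],
            seed: int = 0) -> Tuple[List[Patch], List[Patch]]:
    """Augment the positive patches, then randomly down-sample the
    negatives so both classes have (at most) the same size."""
    augmented = [p for patch in positives for p in augment(patch)]
    rng = random.Random(seed)  # fixed seed keeps the sample reproducible
    k = min(len(augmented), len(negatives))
    sampled = rng.sample(negatives, k)
    return augmented, sampled
```

For example, one positive patch and ten negative patches become four positives and four randomly chosen negatives.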

Setting up the microscope

How did you go about testing your approach in a clinical setting?

To collect the dataset and conduct the experiments we have been working closely with the national referral hospital here in Uganda. They were the ones who collected the training dataset for us. For the testing, we went back to the referral hospital, and they were able to collect a new test dataset for us. Using that, we were able to test the model and compare its output to the expert annotations. We found that our model performed really well, and a strong correlation was observed between our model-generated counts and the manual counts done by microscopy experts. We found a Spearman’s correlation of around 0.98.
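For readers unfamiliar with the metric, Spearman's correlation compares the rank order of two lists, here the model's parasite counts and the experts' counts per image. The sketch below is a plain-Python illustration, not the team's evaluation code; it ranks each list (tied values share their average rank) and then computes the Pearson correlation of the ranks. The function names are illustrative.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n; tied values receive the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rank correlation: Pearson correlation of the ranks.

    Assumes both inputs have the same length and neither is constant.
    """
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    return cov / (vx * vy) ** 0.5
```

A value of 1 means the model and the experts order the images identically by parasite count; a value near 0.98, as reported above, means the two orderings agree almost everywhere.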

What are the next steps in the project?

Our AI lab is still working closely with the collaborating lab, but we have not yet tested the models outside this scope. We need to go beyond this scope as we understand that there are different environmental aspects that may affect the performance of our models. We’ve only been working with a few microscopes. In the field, there are many different smartphones, and there are many microscopes, so the environmental set-up of the datasets that we collect for training might actually change. So, the next step would be to standardize those procedures. I believe that this testing will provide many good insights for improvement of the model. This will also help in making it work as a field-based set-up that can be deployed outside the scope of where we are operating right now.

So, presumably this method could be transferred to other diseases?

Exactly. We wanted to generalize it to other diseases, and we have projects running for a few microscopically diagnosable diseases, for example tuberculosis and intestinal parasites. The idea was to check whether the approach is transferable. We found that the entire pipeline was able to work on datasets for tuberculosis and intestinal parasites. We have only carried out a preliminary study so far, and it would be interesting to try it in different environments. We believe that this pipeline could also support most of the microscopically diagnosed diseases. In addition, other medical image analysis problems could benefit from this pipeline.

How do the clinicians feel about using machine learning in their day-to-day work?

It’s a very good question because, of course, these particular solutions are so recent, and I know they are disrupting normal procedures. What we do is involve clinicians every step of the way, so from the conception of the problem itself they were on board. We are working with the national referral hospital, which also doubles as a microscopy teaching institute. Before we had even provided our solution, our work was useful to them. The images taken by a smartphone using our set-up could be used to support the teaching classes. Instead of the instructor looking through the microscope and telling the students what to look out for, they were able to project the image so that everyone had the same view of what’s under the microscope.

We’ve also had feedback from clinicians that it’s very easy for them to look at this parasite from the comfort of their chairs as the image is available on their smartphone.

The important thing is involving them and having them appreciate the solution. We also want to assure them that the solutions we develop will not cut them out but rather will support their day-to-day routines. As much as we develop these solutions, the person who gives the final decision on what the model is producing should always be the microscopist, as they are the experts. That’s the way we think about these problems and are able to translate them into actionable solutions.

About Rose Nakasi

Rose Nakasi holds a PhD in Computer Science from Makerere University. She is a Lecturer of Computer Science as well as a Research Scientist at the Makerere Artificial Intelligence Lab at Makerere University, Uganda. Rose is also an active member of the Data Science Africa community and a Chair for the Topic Group AI based detection of Malaria (TG-Malaria) at the ITU-WHO Focus Group AI for Health (FGAI4H). Her research interests are in artificial intelligence and data science, and particularly in the use of these for developing improved automated tools and techniques for microscopy diagnosis of diseases like malaria in low-resourced but highly endemic settings.

The AI Around the World series is supported through a donation from the Mohamed bin Zayed University of Artificial Intelligence (MBZUAI). AIhub retains editorial freedom in selecting and preparing the content.
