AIhub.org

AI transparency in practice: a report

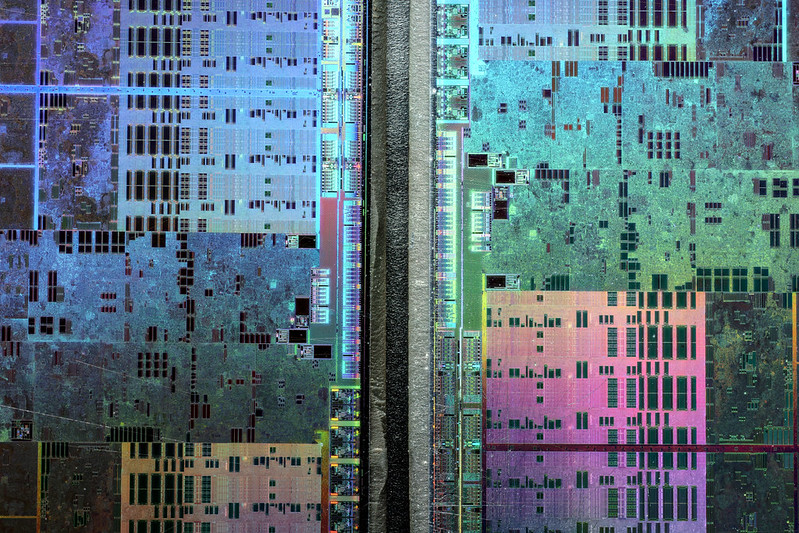

Fritzchens Fritz / Better Images of AI / GPU shot etched 5 / Licenced by CC-BY 4.0

A report, co-authored by Ramak Molavi Vasse’i (Mozilla’s Insights Team) and Jesse McCrosky (Thoughtworks), investigates the issue of AI transparency. The pair dig into what AI transparency actually means, and aim to provide useful and actionable information for specific stakeholders. The report also details a survey of current approaches, assesses their limitations, and outlines how meaningful transparency might be achieved.

The authors have highlighted the following key findings from their report:

- The focus of builders is primarily on system accuracy and debugging, rather than helping end users and impacted people understand algorithmic decisions.

- AI transparency is rarely prioritized by the leadership of respondents’ organizations, partly because there is little pressure to comply with legislation.

- While there is active research around AI explainability (XAI) tools, there are fewer examples of effective deployment and use of such tools, and little confidence in their effectiveness.

- Apart from information on data bias, there is little work on sharing information on system design, metrics, or wider impacts on individuals and society. Builders generally do not employ criteria established for social and environmental transparency, nor do they consider unintended consequences.

- Providing appropriate explanations to various stakeholders poses a challenge for developers. There is a noticeable discrepancy between the information survey respondents currently provide and the information they would find useful and recommend.

Topics covered in the report include:

- Meaningful AI transparency

- Transparency stakeholders and their needs

- Motivations and priorities of builders around AI transparency

- Transparency tools and methods

- Awareness of social and ecological impact

- Transparency delivery – practices and recommendations

- Ranking challenges for greater AI transparency

You can read the report in full here. A PDF version is here.

Lucy Smith is Senior Managing Editor for AIhub.
