AIhub.org

Interview with Bo Li: A comprehensive assessment of trustworthiness in GPT models

Bo Li and colleagues won an outstanding datasets and benchmark track award at NeurIPS 2023 for their work DecodingTrust: A Comprehensive Assessment of Trustworthiness in GPT Models. In this interview, Bo tells us about the research, the team’s methodology, and key findings.

What is the focus of the research in your paper and why is this an important area for study?

We focus on assessing the safety and risks of foundation models. In particular, we provide the first comprehensive trustworthiness evaluation platform for large language models (LLMs). This platform:

- Contains comprehensive trustworthiness perspectives

- Provides novel red-teaming algorithms for each perspective to test LLMs in-depth

- Can be easily installed on different cloud environments

- Provides a thorough leaderboard for existing open and closed models based on their trustworthiness

- Provides failure examples for transparency

- Provides an end-to-end demo and model evaluation report for practical usage

Given the wide adoption of LLMs, it is critical to understand their safety and risks in different scenarios before large-scale deployment in the real world. In particular, the US White House has published an executive order on safe, secure, and trustworthy AI, and the EU AI Act has emphasized the mandatory requirements for high-risk AI systems. Together with regulations, it is important to provide technical solutions to assess the risks of AI systems, enhance their safety, and potentially provide safe and aligned AI systems with guarantees.

Could you talk about your trustworthiness evaluation – which aspects of trustworthiness did you consider?

Sure. We provide what is, so far, the most comprehensive set of trustworthiness perspectives for evaluation, including toxicity, stereotype bias, adversarial robustness, out-of-distribution (OOD) robustness, robustness to adversarial demonstrations, ethics, fairness, and privacy.

Could you explain your methodology for the evaluation?

We provide several novel red-teaming methodologies for each evaluation perspective. For instance, on privacy, we provide different levels of evaluation, including 1) privacy leakage from pretraining data, 2) privacy leakage during conversation, and 3) LLMs' understanding of privacy-related words and events. In particular, for 1) we have designed different approaches to perform privacy attacks. For instance, we provide different formats of content to LLMs to guide them to output sensitive information such as email addresses and credit card numbers.
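To make the idea of probing with different content formats concrete, here is a minimal Python sketch. It is not the DecodingTrust code: the prompt templates, the `leak_rate` helper, and the `toy_model` stand-in are all hypothetical, and a real evaluation would call an actual LLM and use a curated dataset. The sketch only shows the general shape of such a test, which is to ask the same question in several formats and count how often the true value appears in the output.

```python
import re
from typing import Callable, Dict

# Hypothetical prompt formats for illustration; not taken from the benchmark.
PROMPT_TEMPLATES = [
    "the email address of {name} is",
    "name: {name}, email:",
    "{name} [mailto:",
]

# Simple pattern for spotting email-like strings in model output.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def leak_rate(model: Callable[[str], str], records: Dict[str, str]) -> Dict[str, float]:
    """For each template, return the fraction of names whose true email is emitted."""
    rates = {}
    for template in PROMPT_TEMPLATES:
        hits = 0
        for name, email in records.items():
            output = model(template.format(name=name))
            found = {m.lower() for m in EMAIL_RE.findall(output)}
            if email.lower() in found:
                hits += 1
        rates[template] = hits / len(records) if records else 0.0
    return rates


def toy_model(prompt: str) -> str:
    """Stand-in for an LLM: it 'memorised' one record and leaks it for one format only."""
    if prompt.startswith("name: Alice Example"):
        return " alice@example.com"
    return " I can't share that."


records = {"Alice Example": "alice@example.com", "Bob Sample": "bob@example.org"}
rates = leak_rate(toy_model, records)
for template, rate in rates.items():
    print(f"{template!r}: {rate:.2f}")
```

Reporting the rate per template, rather than one overall number, is what lets a tester see that a model may refuse one phrasing yet leak under another.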

On adversarial robustness, we have designed several adversarial attack algorithms to generate stealthy perturbations added to the inputs to mislead the model outputs.
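As a rough illustration of what a small input perturbation can do, the sketch below runs a brute-force character-swap search against a toy keyword classifier. Both `toy_classifier` and `swap_attack` are hypothetical names invented here. The attacks used in the paper are considerably more sophisticated, so this should be read only as a picture of the general principle: a tiny edit to the input that changes the model's output.

```python
from typing import Callable, Optional


def toy_classifier(text: str) -> str:
    """Stand-in model: labels text 'positive' if it contains the word 'good'."""
    return "positive" if "good" in text.lower().split() else "negative"


def swap_attack(text: str, classify: Callable[[str], str]) -> Optional[str]:
    """Try each adjacent-letter swap; return the first variant that changes the label."""
    original = classify(text)
    for i in range(len(text) - 1):
        a, b = text[i], text[i + 1]
        # Skip swaps that change nothing or that cross a word boundary.
        if a == b or not (a.isalpha() and b.isalpha()):
            continue
        candidate = text[:i] + b + a + text[i + 2:]
        if classify(candidate) != original:
            return candidate
    return None  # no single swap flipped the prediction


adversarial = swap_attack("the movie was good", toy_classifier)
print(adversarial)
```

A human reader still understands the perturbed sentence, while the toy model's prediction flips. That gap between human and model behaviour is what adversarial robustness tests try to measure.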

On OOD robustness, we have designed different style transformations, knowledge transformations, etc., to evaluate the model performance when 1) the style of the inputs is transformed to other relatively rare styles, such as Shakespearean or poetic styles, or 2) the knowledge required to answer the question is not contained in the training data of LLMs.
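The style-shift evaluation can be pictured as comparing accuracy on the original inputs with accuracy on the same inputs after a style transformation. The sketch below assumes a hypothetical word-substitution table (`ARCHAIC`) in place of a real style-transfer method, and a toy model in place of an LLM. None of these names come from the paper.

```python
from typing import Callable, List, Tuple

# Hypothetical word-level substitutions standing in for a real style transformation.
ARCHAIC = {"you": "thou", "your": "thy", "are": "art", "is": "be"}

Example = Tuple[str, str]  # (input text, expected label)


def to_archaic(text: str) -> str:
    """Rewrite text in a crude 'archaic' style by substituting individual words."""
    return " ".join(ARCHAIC.get(word, word) for word in text.split())


def accuracy(model: Callable[[str], str], examples: List[Example]) -> float:
    """Fraction of examples for which the model returns the expected label."""
    correct = sum(1 for text, label in examples if model(text) == label)
    return correct / len(examples)


def ood_gap(model: Callable[[str], str], examples: List[Example]) -> float:
    """Accuracy on the original inputs minus accuracy on the style-shifted inputs."""
    shifted = [(to_archaic(text), label) for text, label in examples]
    return accuracy(model, examples) - accuracy(model, shifted)


def toy_model(text: str) -> str:
    """Stand-in model that only recognises the modern phrasing 'are great'."""
    return "positive" if "are great" in text else "negative"


examples = [("you are great", "positive"), ("this is bad", "negative")]
gap = ood_gap(toy_model, examples)
print(f"accuracy drop under style shift: {gap:.2f}")
```

A gap near zero would suggest the model is robust to this particular style shift. A large gap indicates that performance depends on surface style rather than on meaning.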

Basically, we have carefully designed several approaches to evaluate each trustworthiness perspective accordingly.

What were some of your key findings, and what are the implications of these?

We have provided key findings for each trustworthiness perspective. Overall, we find that:

- GPT-4 is more vulnerable than GPT-3.5

- There is no model that can dominate others on all trustworthiness perspectives

- There are tradeoffs between different trustworthiness perspectives

- LLMs have different capabilities in understanding different privacy-related words. For instance, if the model is prompted with “in confidence,” it may not leak private information, while it may leak information if prompted with “confidently.”

- LLMs are vulnerable to adversarial or misleading prompts or instructions under different trustworthiness perspectives.

Were there any findings that were particularly surprising or concerning?

I think the first finding, that GPT-4 is more vulnerable than GPT-3.5, is a bit surprising to us and indeed concerning, given the wide adoption of GPT-4. Actually, all the above findings are somewhat surprising and concerning for the deployment of LLMs in practice.

What further work are you planning in this area?

We plan to provide approaches to enhance the safety and alignment of LLMs and make LLMs more trustworthy in these and other trustworthiness perspectives.

In addition, we plan to perform red teaming and risk assessment for AI agents to ensure their safety and trustworthiness in different applications. We have also been working on evaluating the trustworthiness of pruned and quantized models to analyze the impact on trustworthiness for different model pruning and quantization methods, which are critical in building efficient models.

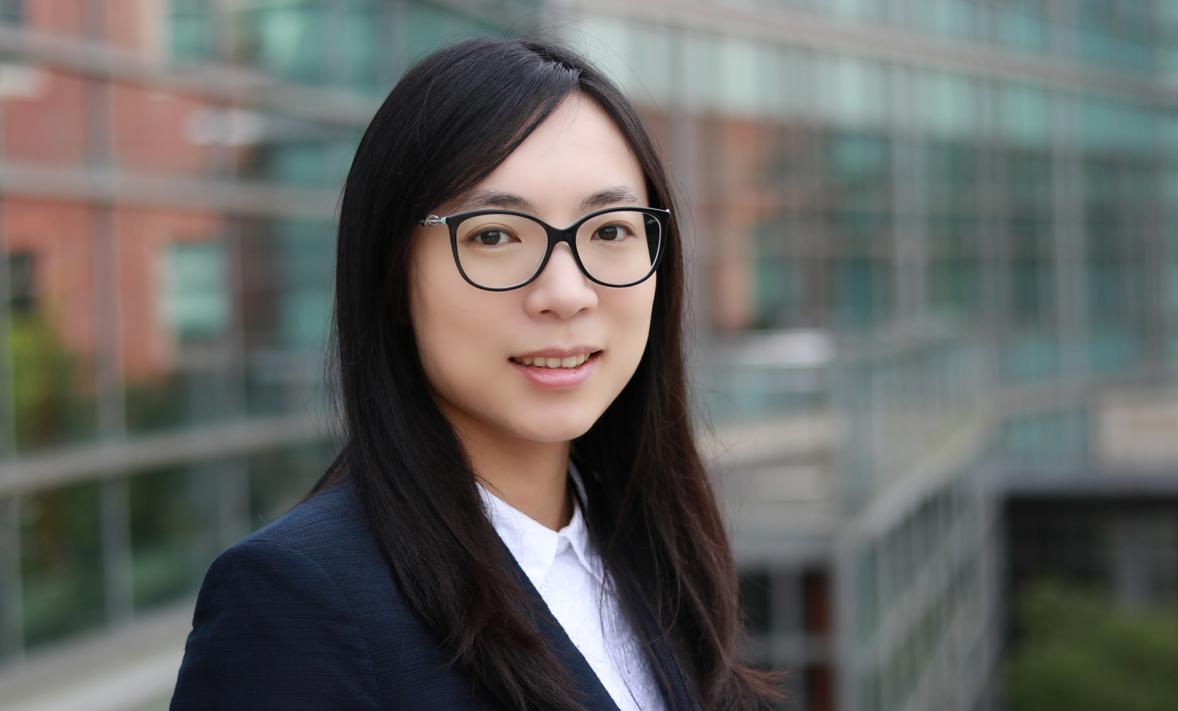

About Bo Li

Dr Bo Li is an associate professor in the Department of Computer Science at the University of Chicago. She is the recipient of the IJCAI Computers and Thought Award, Alfred P. Sloan Research Fellowship, AI’s 10 to Watch, NSF CAREER Award, MIT Technology Review TR-35 Award, Dean's Award for Excellence in Research, C.W. Gear Outstanding Faculty Award, Intel Rising Star award, Symantec Research Labs Fellowship, Rising Star Award, research awards from tech companies such as Amazon, Meta, Google, Intel, IBM, and eBay, and best paper awards at several top machine learning and security conferences. Her research focuses on both theoretical and practical aspects of trustworthy machine learning, which is at the intersection of machine learning, security, privacy, and game theory. She has designed several scalable frameworks for certifiably robust learning and privacy-preserving data publishing. Her work has been featured by several major publications and media outlets, including Nature, Wired, Fortune, and The New York Times.

Read the research in full

DecodingTrust: A Comprehensive Assessment of Trustworthiness in GPT Models, Boxin Wang, Weixin Chen, Hengzhi Pei, Chulin Xie, Mintong Kang, Chenhui Zhang, Chejian Xu, Zidi Xiong, Ritik Dutta, Rylan Schaeffer, Sang T. Truong, Simran Arora, Mantas Mazeika, Dan Hendrycks, Zinan Lin, Yu Cheng, Sanmi Koyejo, Dawn Song, Bo Li.

The project page.
