AIhub.org

#ICLR2024 invited talk: Priya Donti on why your work matters for climate more than you think

Image by Alan Warburton / © BBC / Better Images of AI / Nature / Licenced by CC-BY 4.0

Image by Alan Warburton / © BBC / Better Images of AI / Nature / Licenced by CC-BY 4.0

The Twelfth International Conference on Learning Representations (ICLR2024) took place from 7-11 May in Vienna. The program included workshops, contributed talks, affinity group events, and socials. There were also seven invited talks that covered a broad range of topics. In this post, we give a summary of the talk by Priya Donti.

Priya’s research focuses on machine learning for forecasting, optimization, and control in power grids. She is an Assistant Professor and the Silverman (1968) Family Career Development Professor at MIT. She is also co-founder and Chair of Climate Change AI, a global nonprofit initiative with the aim of “catalyzing impactful work at the intersection of climate change and machine learning”.

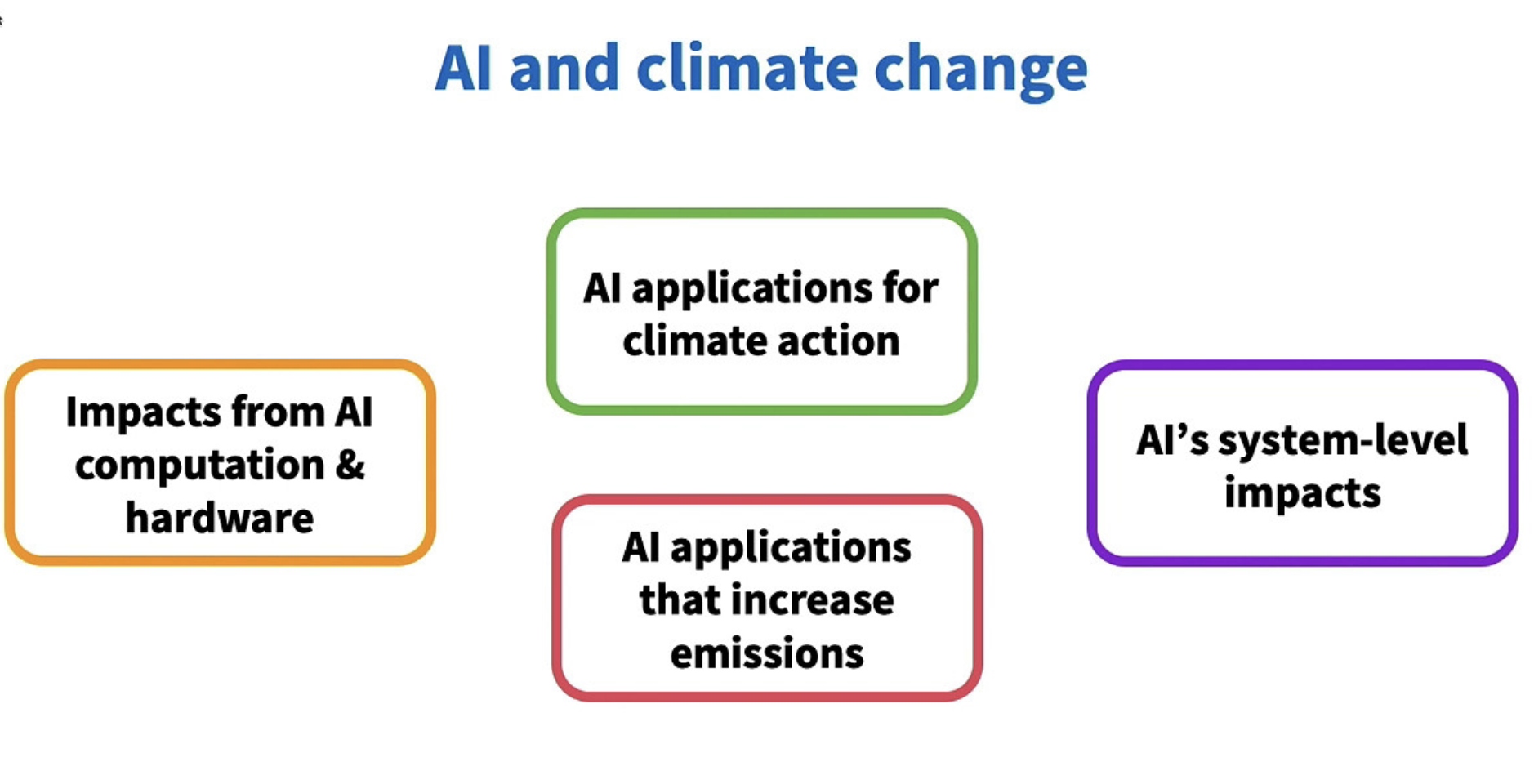

The relationship between AI and climate change is multi-faceted. Priya’s presentation focused on these links and gave some examples of how AI researchers could better align their work with climate-related goals.

Slide from Priya’s talk. The four key relationships between AI and climate change: 1) AI applications for climate, 2) Impacts from AI computation and hardware, 3) AI applications that increase emissions, 4) AI’s system-level impacts.

Slide from Priya’s talk. The four key relationships between AI and climate change: 1) AI applications for climate, 2) Impacts from AI computation and hardware, 3) AI applications that increase emissions, 4) AI’s system-level impacts.

AI applications for climate

Perhaps the most widely discussed relationship between AI and climate change is the use of AI to help tackle aspects of the climate crisis. For a comprehensive overview of the ways machine learning (ML) can be used across different sectors, a good place to start is the position paper “Tackling climate change with machine learning”, co-written by Priya. Recurring themes from this work were that ML can be particularly useful for distilling raw data, improving predictions, optimising complex systems, accelerating scientific discovery, approximating time-intensive simulations, and managing data.

Priya gave examples of how ML is being used for climate under each of these themes. To illustrate the point about improving predictions, she talked about electric power grids, where operators are trying to integrate renewable energy sources such as solar and wind. Because the output of these sources varies with the weather, it is challenging to maintain a steady supply of electricity on the grid. ML is therefore being used to create nowcasts (very short-term forecasts) of solar and wind power output by combining heterogeneous data streams, such as historical data, weather predictions, and imagery of clouds moving overhead.

Impacts from AI computation and hardware

The flip side to AI techniques being used to accelerate climate solutions is that AI systems themselves can have a significant impact on the environment. These impacts fall into two main categories: 1) operational impacts from the energy and water consumed while the systems are running, and 2) emissions and material impacts from the production, transportation and disposal of the hardware.

Operational impacts occur both during the training and development of a model and every time the model is subsequently used. Although each use (or inference) is much less computationally expensive than training, inference happens far more frequently. Priya stressed that we should be keeping track of the ratio of energy used for training versus inference. She also pointed to research showing that multi-purpose models can be orders of magnitude more expensive per inference than task-specific models.
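To make the training-versus-inference comparison concrete, here is a minimal Python sketch of the arithmetic. It assumes a fixed, one-off training cost and a constant energy cost per inference; the function names and all numbers are hypothetical, chosen purely for illustration, and are not figures from the talk.

```python
def cumulative_inference_kwh(kwh_per_inference: float, num_inferences: int) -> float:
    """Total energy spent serving a model after a given number of inferences.

    Assumes (hypothetically) that every inference costs the same energy.
    """
    return kwh_per_inference * num_inferences


def training_to_inference_ratio(training_kwh: float,
                                kwh_per_inference: float,
                                num_inferences: int) -> float:
    """Ratio of one-off training energy to cumulative inference energy.

    A value above 1 means training still dominates the energy budget;
    a value below 1 means inference has overtaken training.
    """
    return training_kwh / cumulative_inference_kwh(kwh_per_inference, num_inferences)


def break_even_inferences(training_kwh: float, kwh_per_inference: float) -> float:
    """Number of inferences at which inference energy equals training energy."""
    return training_kwh / kwh_per_inference


if __name__ == "__main__":
    # Hypothetical values for illustration only (not taken from the talk).
    TRAINING_KWH = 1_000.0
    KWH_PER_INFERENCE = 0.001

    print(f"break-even after {break_even_inferences(TRAINING_KWH, KWH_PER_INFERENCE):,.0f} inferences")
    for n in (100_000, 1_000_000, 10_000_000):
        ratio = training_to_inference_ratio(TRAINING_KWH, KWH_PER_INFERENCE, n)
        print(f"{n:>10,} inferences -> training/inference ratio {ratio:.2f}")
```

With these made-up numbers, cumulative inference energy matches the training energy after one million queries, and by ten million queries training accounts for only a tenth as much energy as inference. Because the break-even point is training energy divided by per-inference energy, a model that costs more per inference (as the research on multi-purpose models suggests) reaches it sooner.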

AI applications that increase emissions

Whilst the first two relationships outlined above are the ones we principally hear about in the media, with AI touted as either a climate saviour or a destroyer (or a bit of both), the picture is broader than that. AI is also being used to accelerate emission-intensive industries. For example, some researchers are working with oil and gas companies, using ML techniques to search for and extract these resources. This has boosted oil production and could yield billions for the sector by 2025. This work has slowed the transition to renewable energy sources. Priya also cited the example of the “Internet of Cows”, which uses ML to monitor dairy production. The cattle industry is a major contributor to greenhouse gas emissions and habitat destruction around the world.

AI’s system-level impacts

The community works on many projects that it does not automatically think of as climate-relevant, but which are, and these projects can be better aligned with climate goals. Research on autonomous vehicles and ride-sharing is a good example: it is unclear whether this work will, overall, be good or bad for the climate. On the one hand, it could focus on facilitating public transportation and connecting different modes of transport. On the other hand, it could entrench the role of private, and potentially fossil-fuelled, transportation.

How can the community help?

In the second part of her presentation, Priya described some of the ways that the AI community can get more involved in climate work, citing four general areas:

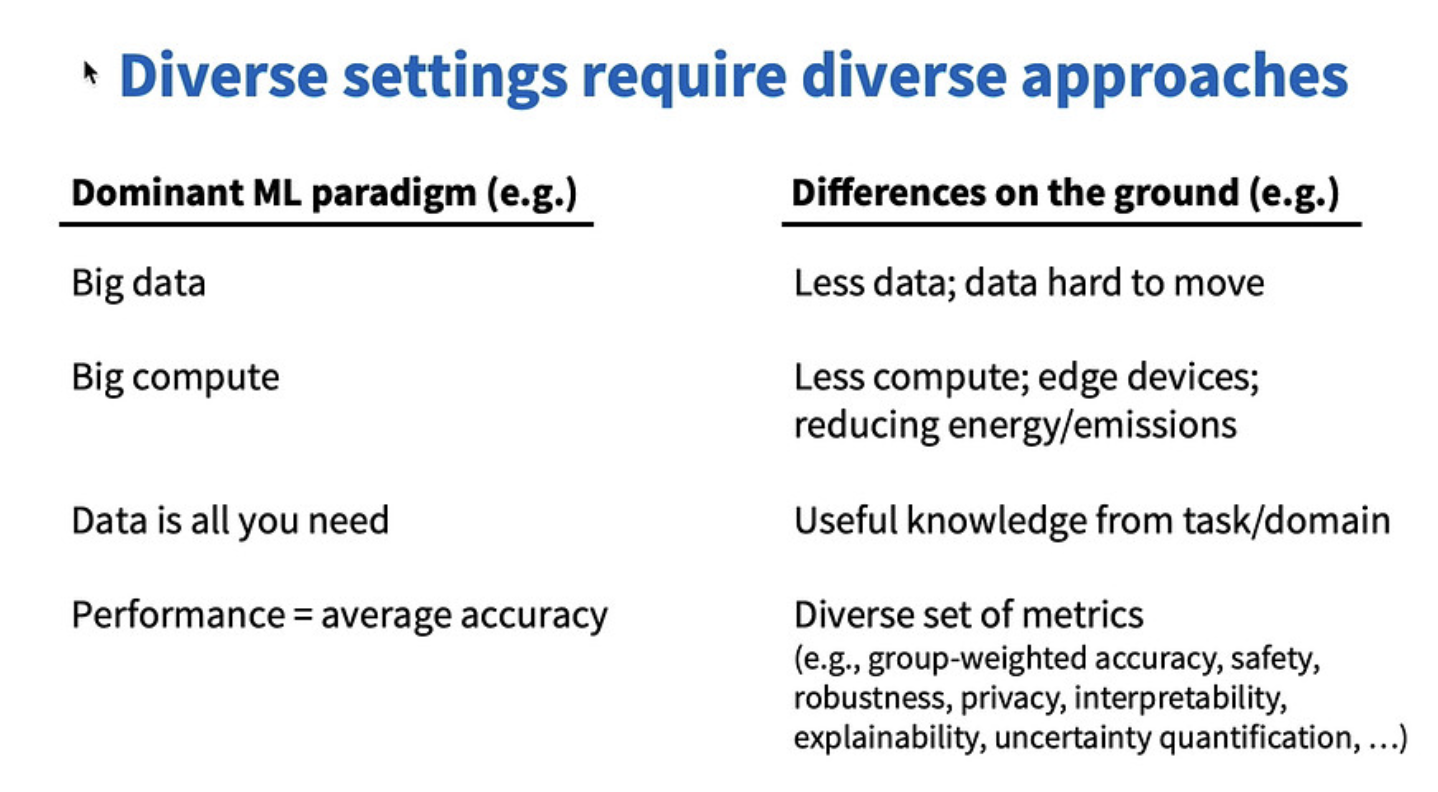

- Methodological innovation. Climate problems are highly diverse, and tackling them successfully requires a diverse set of approaches. This means that some of the assumptions of the dominant AI paradigm (big data, big compute, “data is all you need”, etc.) do not hold in climate settings. Priya urged researchers to consider a broad range of methods, including physics-informed ML, safe and robust ML, interpretable ML, uncertainty quantification, causality, energy-efficient ML, TinyML, and AutoML.

- Applications. The most direct way to contribute is to work on climate-relevant applications. At the very least, researchers should avoid work that clearly counters climate goals. As Priya mentioned earlier in her talk, other areas also affect the climate even if they are not obviously related to it, and researchers can shift the emphasis of such work to make it more climate-friendly. For example, on-demand delivery generally leads to increased consumption, but researchers could focus their work on fuel efficiency or shipment bundling.

- Practices. Researchers can also change how they and their organisations work. They can advocate for organisational policies such as internal carbon pricing, transparent reporting, and impact assessments. Researchers can also come together to think about ways to align their “business as usual” work with climate goals.

- Public communication. Priya noted that there is public excitement about AI, but many people lack a mental model of what AI actually is and what it can and can’t do, which has real implications. She urged researchers to communicate about their work with both the research community and the general public in mind. There should be transparency about the strengths, limitations and risks of AI systems, and we should also highlight the diversity of methods and perspectives that exist.

Slide from Priya’s talk. Diverse settings require diverse approaches.

Slide from Priya’s talk. Diverse settings require diverse approaches.

If you are interested in finding out more about Climate Change AI, you can visit their website. They also held a workshop at ICLR, which was livestreamed and is available to watch on demand.
