AIhub.org

Interview with Shaghayegh (Shirley) Shajarian: Applying generative AI to computer networks

In this interview series, we’re meeting some of the AAAI/SIGAI Doctoral Consortium participants to find out more about their research. This time, we hear from Shaghayegh (Shirley) Shajarian and learn about her research applying generative AI to computer networks.

Tell us a bit about your PhD – where are you studying, and what is the topic of your research?

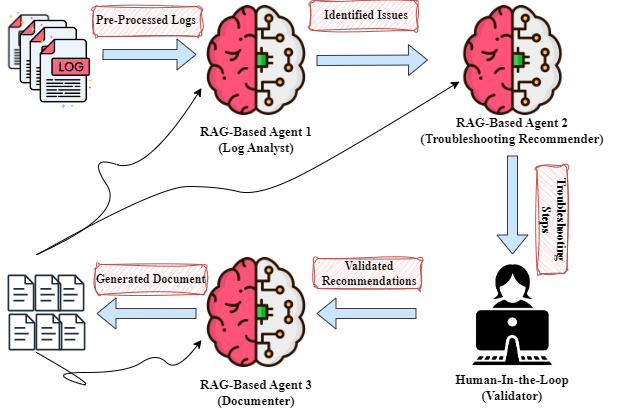

I am a third-year PhD student in the Computer Science department at North Carolina A&T State University, working under Dr Sajad Khorsandroo and Dr Mahmoud Abdelsalam. I am part of the Autonomous Cybersecurity and Resilience Lab, where my research focuses on applying generative AI to computer networks. I am developing AI-driven agents that assist with some network operations, such as log analysis, troubleshooting, and documentation. The goal is to reduce the manual work that network teams deal with every day and move toward more autonomous, self-running networks.

Could you give us an overview of the research you’ve carried out so far during your PhD?

My research is driven by the vision of self-running networks: systems capable of autonomously configuring, optimizing, healing, and protecting themselves. This led me to research the application of generative AI to automate some key aspects of network management operations and reduce human intervention. In addition to my primary focus on autonomous network management, I am also interested in network cybersecurity and have published two journal papers exploring machine learning techniques in cybersecurity: one on classifying malicious domains using transfer learning and another surveying explainable AI (XAI) for malware analysis.

Is there an aspect of your research that has been particularly interesting?

What I find most fascinating is the potential of LLMs to act as intelligent network agents in the face of growing complexity in modern computer networks. Managing these systems manually is becoming costly and unsustainable. Leveraging LLMs to interpret logs, identify issues, and communicate their findings through human-like conversations offers a powerful way to support network operators. This also opens the door to semi-autonomous networks, where LLM-driven agents handle routine tasks while humans remain in the loop to verify, adjust, or override outputs. And ultimately, it brings us closer to the vision of fully autonomous networks that can operate, adapt, and respond without human intervention.

What are your plans for building on your research so far during the PhD – what aspects will you be investigating next?

I research how emerging techniques in generative AI can enhance autonomy in computer networks. Specifically, I am expanding my study by leveraging real-world telemetry and network logs to improve situational awareness and support more effective decision-making in dynamic network environments. Moving forward, I will explore the deployment of LLM-based agents in real-world environments, focusing on their reliability and ability to adapt to changing network conditions. I will also evaluate how these systems can autonomously identify, diagnose, and document network issues while ensuring human-in-the-loop oversight for critical decision-making.

What made you want to study AI, and the area of LLMs?

During my undergraduate and master’s studies in computer software engineering, I became interested in how machine learning models human reasoning. The rise of LLMs increased my curiosity about using them in complex domains like computer networks. I think their ability to support autonomy in systems is important for reducing the burden on operators and limiting the need for manual intervention.

What advice would you give to someone thinking of doing a PhD in the field?

I want to say that being flexible, curious, and up to date is essential. A PhD in a field that combines AI and computer networks requires depth in both areas, and balancing both fields takes discipline and focus, since you need to understand and apply ideas from different technical foundations. So, if you enjoy exploring problems and building solutions, this path brings value.

Also, I believe choosing the right advisor and a good research topic is key. The advisor sets the direction, pace, and standards of your work. I am so fortunate to have two amazing advisors to support me in this way. A topic that aligns with your interests helps you stay focused through the hard phases of research. Do not pursue a PhD for the degree itself; do it because you want to ask questions, find answers, and create something that adds to the field.

Could you tell us an interesting (non-AI related) fact about you?

Certainly. I am a very passionate cook, and if I were not a computer scientist, I would likely be pursuing a career as a chef, ideally working toward earning a Michelin star. Cooking is my creative outlet and one of my favorite ways to connect with others.

About Shirley

Shaghayegh (Shirley) Shajarian is a PhD Student in Computer Science at North Carolina A&T State University. Her research centers on autonomous network management using Generative AI. She holds a B.S. and M.S. in Computer Software Engineering, and her work has been presented at leading AI and computer networks conferences, including AAAI and CoNEXT. Beyond research, she is passionate about teaching, mentoring, and building AI systems that can operate effectively in complex, real-world environments.

tags: AAAI, AAAI Doctoral Consortium, AAAI2025, ACM SIGAI