AIhub.org

AI chatbots can effectively sway voters – in either direction

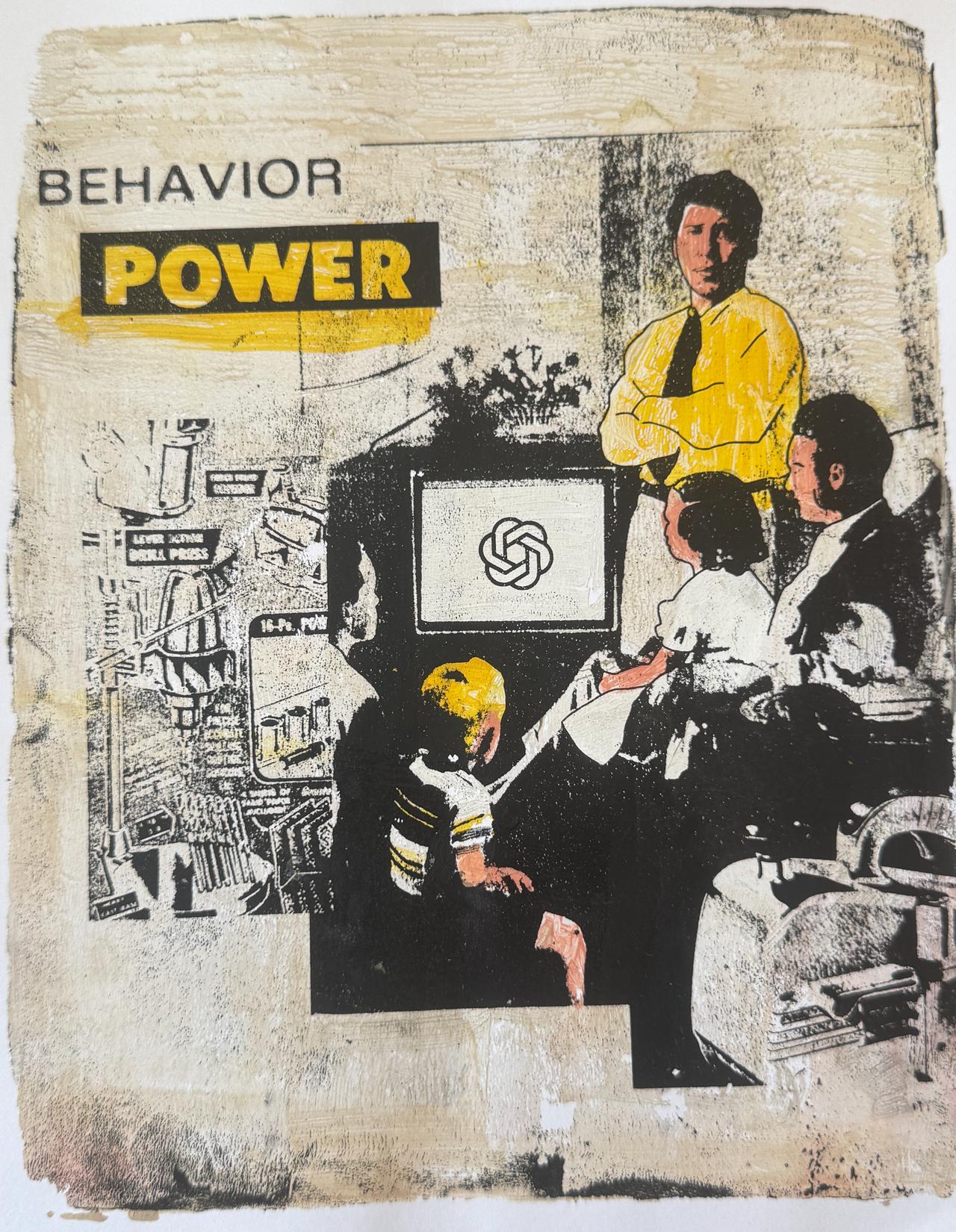

Bart Fish & Power Tools of AI / Behaviour Power / Licensed by CC-BY 4.0

By Patricia Waldron

The potential for artificial intelligence to affect election results is a major public concern. Two new papers – with experiments conducted in four countries – demonstrate that chatbots powered by large language models (LLMs) are quite effective at political persuasion, moving opposition voters’ preferences by 10 percentage points or more in many cases. The LLMs’ persuasiveness comes not from mastery of psychological manipulation, but from the sheer number of claims they generate to support their arguments for candidates’ policy positions.

“LLMs can really move people’s attitudes towards presidential candidates and policies, and they do it by providing many factual claims that support their side,” said David Rand, a senior author on both papers. “But those claims aren’t necessarily accurate – and even arguments built on accurate claims can still mislead by omission.”

The researchers reported these findings in two papers published simultaneously, “Persuading Voters Using Human-Artificial Intelligence Dialogues”, in Nature, and “The Levers of Political Persuasion with Conversational Artificial Intelligence”, in Science.

In the Nature study, Rand, along with co-senior author Gordon Pennycook and colleagues, instructed AI chatbots to change voters’ attitudes regarding presidential candidates. They randomly assigned participants to engage in a back-and-forth text conversation with a chatbot promoting one side or the other and then measured any change in the participants’ opinions and voting intentions. The researchers ran this experiment in three elections: the 2024 U.S. presidential election, the 2025 Canadian federal election and the 2025 Polish presidential election.

In the U.S. experiment, run two months before the election with more than 2,300 Americans, chatbots focused on the candidates’ policies caused a modest shift in opinions. On a 100-point scale, the pro-Harris AI model moved likely Trump voters 3.9 points toward Harris – an effect roughly four times larger than traditional ads tested during the 2016 and 2020 elections. The pro-Trump AI model moved likely Harris voters 1.51 points toward Trump.

In similar experiments with 1,530 Canadians and 2,118 Poles, the effect was much larger: Chatbots moved opposition voters’ attitudes and voting intentions by about 10 percentage points. “This was a shockingly large effect to me, especially in the context of presidential politics,” Rand said.

Chatbots used multiple persuasion tactics, but being polite and providing evidence were the most common. When researchers prevented the model from using facts, it became far less persuasive – showing the central role that fact-based claims play in AI persuasion.

The researchers also fact-checked the chatbots’ arguments with an AI model that had been validated by professional human fact-checkers. While the claims were mostly accurate on average, chatbots instructed to stump for right-leaning candidates made more inaccurate claims than those advocating for left-leaning candidates in all three countries. This result – which was validated using politically balanced groups of laypeople – mirrors the often-replicated finding that social media users on the right share more inaccurate information than users on the left, Pennycook said.

In the Science paper, Rand collaborated with colleagues at the UK AI Security Institute to investigate what makes these chatbots so persuasive. They measured the shifts in opinions of almost 77,000 participants from the U.K. who engaged with chatbots on more than 700 political issues.

“Bigger models are more persuasive, but the most effective way to boost persuasiveness was instructing the models to pack their arguments with as many facts as possible, and giving the models additional training focused on increasing persuasiveness,” Rand said. “The most persuasion-optimized model shifted opposition voters by a striking 25 percentage points.”

This study also showed that the more persuasive a model was, the less accurate the information it provided. Rand suspects that as the chatbot is pushed to provide more and more factual claims, eventually it runs out of accurate information and starts fabricating.

The discovery that factual claims are key to an AI model’s persuasiveness is further supported by a third recent paper in PNAS Nexus by Rand, Pennycook and colleagues. The study showed that arguments from AI chatbots reduced belief in conspiracy theories even when people thought they were talking to a human expert. This suggests it was the compelling messages that worked, not a belief in the authority of AI.

In both the Nature and Science studies, all participants were told they were conversing with an AI and were fully debriefed afterward. Additionally, the direction of persuasion was randomized so the experiments would not shift opinions overall.

Studying AI persuasion is essential to anticipate and mitigate misuse, the researchers said. By testing these systems in controlled, transparent experiments, they hope to inform ethical guidelines and policy discussions about how AI should and should not be used in political communication.

Rand also points out that chatbots can only be effective persuasion tools if people engage with the bots in the first place – a high bar to clear.

But there’s little question that AI chatbots will be an increasingly important part of political campaigning, Rand said. “The challenge now is finding ways to limit the harm – and to help people recognize and resist AI persuasion.”