AIhub.org

Scaling up multi-agent systems: an interview with Minghong Geng

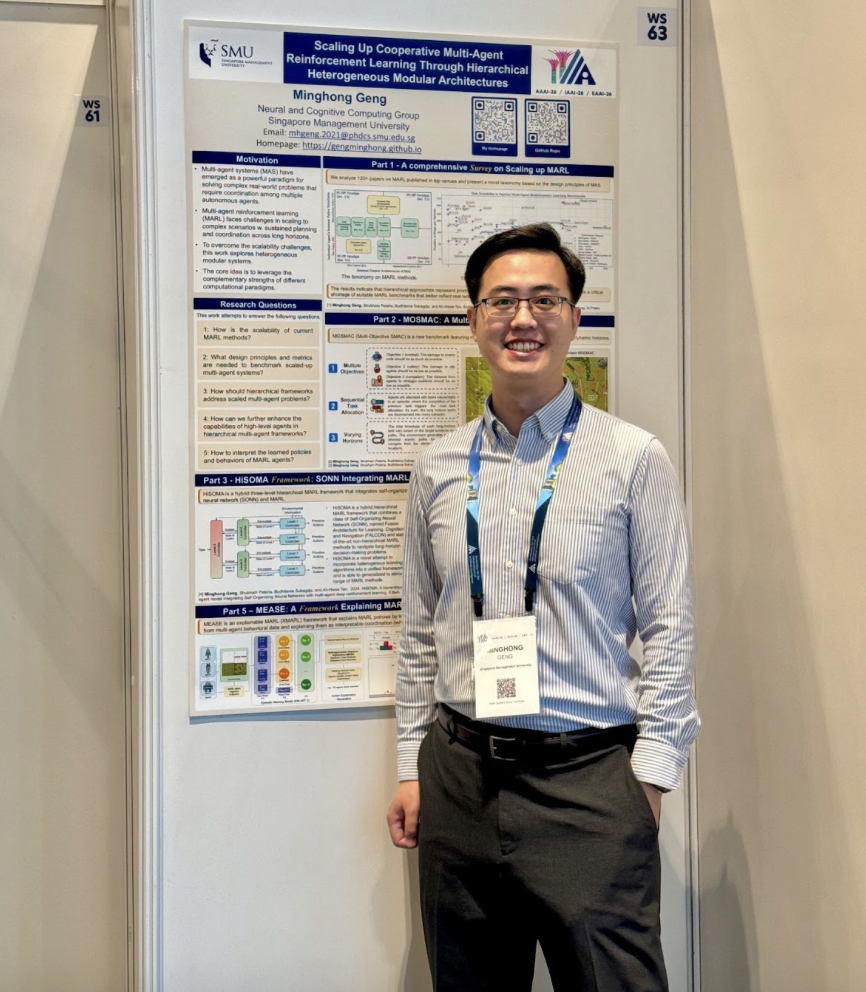

In this interview series, we’re meeting some of the AAAI/SIGAI Doctoral Consortium participants to find out more about their research. Minghong Geng recently completed his PhD and is now working as a postdoctoral researcher at Singapore Management University. We sat down to discuss his research on multi-agent systems.

Firstly, congratulations on completing your PhD! What is the general topic of your research?

I work on multi-agent systems. The research problem I’m looking at is scaling up multi-agent systems. That could be to include more agents in the system or to apply the existing systems to larger-scale problems, for example, longer-horizon problems.

Could you talk about some of the projects you carried out during your PhD?

My PhD research unfolded across three main directions. Early on, I focused on developing hierarchical methods to scale up multi-agent systems. The core idea was a divide-and-conquer approach: a high-level agent decomposes a complex problem and delegates subtasks to low-level modules, making otherwise intractable problems more manageable. Our experiments confirmed that this hierarchical organization outperformed standard baselines, and it was encouraging to later see similar ideas emerging in the language model community, where multiple agents are assigned distinct roles to decompose and solve problems collaboratively. This line of thinking led to HiSOMA, where we introduced a three-level hierarchical architecture that combines a self-organizing neural network at the higher level with multiple multi-agent reinforcement learning (MARL) agent teams at the lower level. We also published a detailed survey of the MARL research landscape and released a MARL benchmark named MOSMAC.

Building on that foundation, I then explored integrating large language models (LLMs) into multi-agent systems. The motivation was straightforward: different components have complementary strengths. LLMs excel at holistic environment understanding — they can process rich textual descriptions or visual inputs to reason about high-level goals. Once an LLM identifies a suitable subtask, a more computationally efficient RL policy can execute it. We demonstrated that this hybrid hierarchical design consistently outperformed purely RL-based baselines. This method was presented in our L2M2 paper.

The third direction I pursued was explainability in MARL. After investing significant effort in training a multi-agent system, a natural question arises: what has it actually learned? To answer this, we collected agent trajectories and applied episodic memory models to distill a large set of behavioral traces into a compact set of interpretable strategies. Importantly, we also showed that this distilled knowledge could be fed back into the training process to guide and improve agent learning. This study was reported in our MEASE paper and recently accepted to the AAMAS 2026 conference.
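To make the idea of distilling many behavioral traces into a few strategies concrete, here is a minimal Python sketch. It is not the MEASE method: it swaps the episodic memory models mentioned above for plain frequency counting, and the action names, the `collapse` and `distill` helpers, and the toy returns are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

# A trajectory is (action sequence, episode return). Both are toy assumptions.
Trajectory = Tuple[Sequence[str], float]
Strategy = Tuple[Tuple[str, ...], int, float]  # (pattern, count, mean return)


def collapse(actions: Sequence[str]) -> Tuple[str, ...]:
    """Drop consecutive repeats so similar traces share one pattern."""
    pattern: List[str] = []
    for action in actions:
        if not pattern or pattern[-1] != action:
            pattern.append(action)
    return tuple(pattern)


def distill(trajectories: Sequence[Trajectory], top_k: int = 2) -> List[Strategy]:
    """Group traces by collapsed pattern and keep the top_k most frequent."""
    groups: Dict[Tuple[str, ...], List[float]] = defaultdict(list)
    for actions, episode_return in trajectories:
        groups[collapse(actions)].append(episode_return)
    summary = [
        (pattern, len(returns), sum(returns) / len(returns))
        for pattern, returns in groups.items()
    ]
    # Most frequent first; ties broken by higher mean return.
    summary.sort(key=lambda s: (-s[1], -s[2]))
    return summary[:top_k]


traces: List[Trajectory] = [
    (["move", "move", "attack"], 1.0),
    (["move", "attack", "attack"], 0.5),
    (["retreat", "retreat"], 0.0),
    (["move", "flank", "attack"], 1.0),
]
strategies = distill(traces)
```

Here four traces reduce to two summarized patterns, each with a count and a mean return. A summary of this kind is the sort of compact signal that could, in principle, be fed back into training.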

You talked about dealing with long-horizon problems. Could you give us an example of the types of problems you were looking at?

The motivation came from a gap between how MARL systems are typically evaluated and how intelligent agents would need to operate in the real world. In most standard benchmarks, agents interact with a relatively contained environment for a few hundred steps before the episode ends. In reality, however, an autonomous agent may need to operate continuously over days, weeks, or even years — maintaining stable, effective behavior throughout. That disconnect is what drove my interest in long-horizon problems.

A natural starting point was SMAC (StarCraft Multi-Agent Challenge), one of the most widely used benchmarks in the MARL community since its publication in 2019. Despite its popularity, we identified a key limitation: its time horizon is very short, typically fewer than a hundred steps. We wanted to understand what would happen when agents were required to operate over hundreds or thousands of steps instead.

This question motivated the development of MOSMAC, a new benchmark featuring sequential, multi-objective navigation tasks set across complex terrains — including high ground, low ground, and ramps — that require agents to sustain coordinated behavior over extended horizons. While these tasks are intuitive for humans to reason about, existing MARL algorithms struggled significantly. A recurring failure mode was agents becoming trapped in corners or dead ends, repeating the same ineffective behavior across multiple runs. This revealed a fundamental limitation: without the ability to plan over long horizons, agents lack the strategic awareness needed to navigate complex environments.

These observations directly inspired the hierarchical approach I described earlier. If agents could not handle the full complexity of the terrain, the natural solution was to decompose the problem — having a high-level agent assign intermediate navigation targets for lower-level agents to execute. This insight underpinned both the HiSOMA framework and, subsequently, the L2M2 framework, which further integrated large language models into the hierarchical design.
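The decomposition described here can be illustrated with a toy grid world. The Python sketch below is an assumption-laden illustration, not HiSOMA or L2M2: the 5x5 grid, the wall layout, the hand-written waypoint list standing in for a high-level agent, and the `greedy_step` and `follow` helpers are all hypothetical. It shows a short-sighted low-level policy getting stuck behind a wall when given only the final goal, and reaching it when given intermediate targets.

```python
from typing import List, Optional, Set, Tuple

Cell = Tuple[int, int]

SIZE = 5
# A wall with a single gap at (2, 4). The layout is a toy assumption.
WALLS: Set[Cell] = {(2, 0), (2, 1), (2, 2), (2, 3)}


def is_free(cell: Cell) -> bool:
    x, y = cell
    return 0 <= x < SIZE and 0 <= y < SIZE and cell not in WALLS


def sign(value: int) -> int:
    return (value > 0) - (value < 0)


def greedy_step(pos: Cell, target: Cell) -> Optional[Cell]:
    """Low-level policy: take one distance-reducing step, or None if stuck."""
    x, y = pos
    dx, dy = sign(target[0] - x), sign(target[1] - y)
    candidates: List[Cell] = []
    if dx:
        candidates.append((x + dx, y))
    if dy:
        candidates.append((x, y + dy))
    for cell in candidates:
        if is_free(cell):
            return cell
    return None


def follow(start: Cell, targets: List[Cell], max_steps: int = 50) -> Tuple[Cell, int]:
    """Visit targets in order; return the final position and steps taken."""
    pos, steps = start, 0
    for target in targets:
        while pos != target:
            nxt = greedy_step(pos, target)
            if nxt is None or steps >= max_steps:
                return pos, steps  # stuck in a dead end or out of budget
            pos, steps = nxt, steps + 1
    return pos, steps


start, goal = (0, 0), (4, 0)
flat_result = follow(start, [goal])                          # goal only
hier_result = follow(start, [(1, 4), (3, 4), goal])          # via waypoints
```

With only the final goal, the greedy policy halts at `(1, 0)` in front of the wall. With the three waypoints it reaches `(4, 0)` in 12 steps. In a learned system the waypoints would come from a trained high-level agent rather than a fixed list.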

Are you continuing in the same line of research now as a postdoctoral researcher?

Yes, though with a shift in emphasis. While my PhD was primarily focused on developing hierarchical multi-agent architectures, I am now turning my attention to understanding what trained models have actually learned. Concretely, the questions I am pursuing are: what strategies can be extracted from learned policies, how can that knowledge be used to further improve the training process, and how can it help agents avoid repeating past mistakes? Explainability in Multi-Agent Reinforcement Learning (MARL) is a necessary, functional component for boosting the transparency and dependability of these systems.

Alongside this, I am developing a longer-term research vision centered on building a unified intelligence composed of heterogeneous modules, where each module is itself a multi-agent system. The key insight is that different paradigms — large language models, RL policies, self-organizing neural networks — have complementary strengths and weaknesses. LLMs are powerful reasoners but computationally expensive; RL policies are lightweight and efficient but require careful integration with other components. Rather than committing to any single paradigm, I want to design a system that dynamically draws on whichever module is best suited to the task at hand. The goal is a more capable and flexible intelligence that can tackle problems beyond the reach of any individual approach.

I’m interested in what made you want to study AI originally. What inspired you to go into the field?

My path into AI was anything but linear. As an undergraduate, I studied finance, with every expectation of pursuing a career in banking or financial services. After graduating, I did exactly that — interning at several well-regarded finance firms and working alongside talented people in the industry. But that experience also revealed something unexpected: I found myself increasingly drawn to the mathematical and computational tools underlying financial problems, and I began to wonder whether those tools could open doors to something new.

That realization prompted me to pursue a master’s program at Singapore Management University, which offered an interdisciplinary curriculum spanning finance, computer science, the Internet of Things, and more. The flexibility of the program gave me the space to explore, and I found myself gravitating almost entirely toward AI — or what was more commonly called machine learning and deep learning at the time.

The turning point came at the end of my master’s studies. I was introduced to my current supervisor, Professor TAN Ah-Hwee, and I joined his research group in December 2020 as a research engineer. Within eight months, I had transitioned into the PhD program. Looking back, I am genuinely grateful for the encouragement I received at that stage, as I had little prior research experience and the guidance made an enormous difference. It was a gradual but deliberate journey from finance undergraduate to AI researcher, and one I find deeply rewarding.

It’s interesting to hear how you changed fields. For our final question, could you tell us an interesting fact about you?

As an undergraduate, I hosted a music podcast for several years, essentially serving as a DJ, curator, and scriptwriter all at once. It was a surprisingly demanding commitment: every week we had to research artists, curate playlists, write scripts, and produce the show. Looking back, it taught me a great deal about discipline, communication, and the importance of presenting ideas in an engaging way. Those skills have carried over into academic life, perhaps more than I expected. It was also simply a lot of fun, and a great reminder that curiosity and passion can take you in unexpected directions.

About Minghong

Minghong Geng is a Research Fellow at Singapore Management University, where his research focuses on multi-agent systems and multi-agent reinforcement learning. He received his PhD in January 2026. During his doctoral studies, he developed hierarchical frameworks and benchmarking tools to enable effective cooperation among large agent teams on long-horizon tasks. Minghong received the SMU Full PhD Scholarship and was named an SMU Presidential Doctoral Fellow (2025). He also received an IJCAI volunteer grant and travel grant (2025) and an AAMAS student scholarship (2024-2025). His work has been published in venues including AAMAS (2023-2026), IJCAI (2025), and ESwA. He is an active reviewer for the AAMAS, AAAI, ICML, and ICLR conferences.

tags: AAAI, AAAI Doctoral Consortium, AAAI2026, ACM SIGAI