ΑΙhub.org

Formal verification for safety evaluation of autonomous vehicles: an interview with Abdelrahman Sayed Sayed

In this interview series, we’re meeting some of the AAAI/SIGAI Doctoral Consortium participants to find out more about their research. We sat down with Abdelrahman Sayed Sayed to chat about his work on formal verification applied to autonomous vehicles.

Could you tell us a bit about where you’re studying and the broad topic of your research?

I’m a Marie Skłodowska-Curie PhD Fellow at Université Gustave Eiffel in France. My PhD topic is formal verification of neural ODE (ordinary differential equations) for safety evaluation in autonomous vehicles. It’s a bit of a long title! It’s an interdisciplinary PhD at the intersection of continuous AI models, formal methods, and autonomous systems.

Here in France, I’m doing mostly the theoretical work, and I’m also currently doing a research stay at the Department of Marine Technology in Trondheim, Norway, where I’m reflecting on the theoretical findings from the first two years of my PhD, and applying them to autonomous vehicles in marine and maritime domains.

Could you say something about formal verification and why it’s such an important topic?

Actually, the main motive for pursuing my PhD research and formal verification of neural ODE is because I personally don’t trust AI perception models or AI-controlled applications. However, if we have a formal guarantee that our model is going to behave in a specific way, or within specific control values, we can have some trust. From my interactions with industrial partners and people who are operating robots in safety-critical applications, none of them are willing to implement even small AI functions because their applications interact with human beings or critical infrastructure. So introducing formal verification to such AI-based components can give them some guarantees. It can also help them abide by the EU (or other) regulations covering how such kinds of models can interact with human beings and so on.

I understand that your PhD so far has comprised three different research projects, or directions. Could you tell us about the first one?

As I mentioned, my PhD is interdisciplinary, touching several communities – from AI to control and formal methods to applications in autonomous vehicles. The first research direction was about creating formal relations between discrete and continuous neural models. I investigated the close relationship between neural ODE (which are kind of continuous AI models or functions) and a residual network, which is a discrete model.

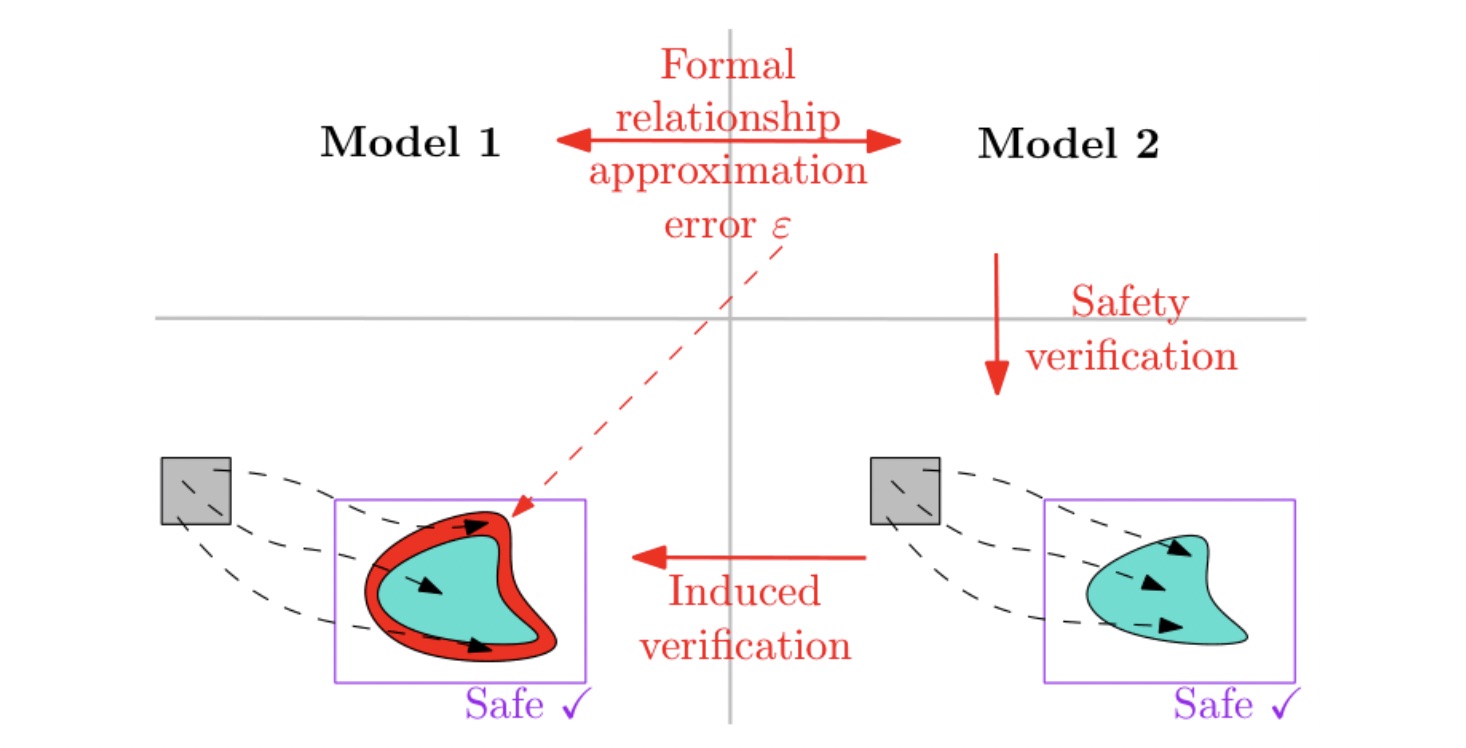

Neural ODE is actually a recent model, introduced in 2018, and there has been quite a lot of interest in it in both the control and AI communities because it can handle time series data. So it’s opening the door for real-world applications. But still the link between neural ODE and other discrete models was kind of vague. So in our work, the main idea was to establish a formal relationship between these two models by bounding the approximation error between them. The second thing we did was to construct a verification proxy, meaning that if we verify one model and we have guarantees on it, we can reflect it on the other models without the need to verify the other model as well. And that was an idea that stemmed mainly because of the lack of verification-based analysis tools for neural ODE. So if we manage to verify a neural network model, which we have some tools to do, we can reflect this on a neural ODE model.

Illustration of the proposed framework to verify Model 1 based on the outcome of the verification of Model 2 and a bound ε on the maximal error between the models.

Illustration of the proposed framework to verify Model 1 based on the outcome of the verification of Model 2 and a bound ε on the maximal error between the models.

Interesting! What did you move on to investigate for the second research direction?

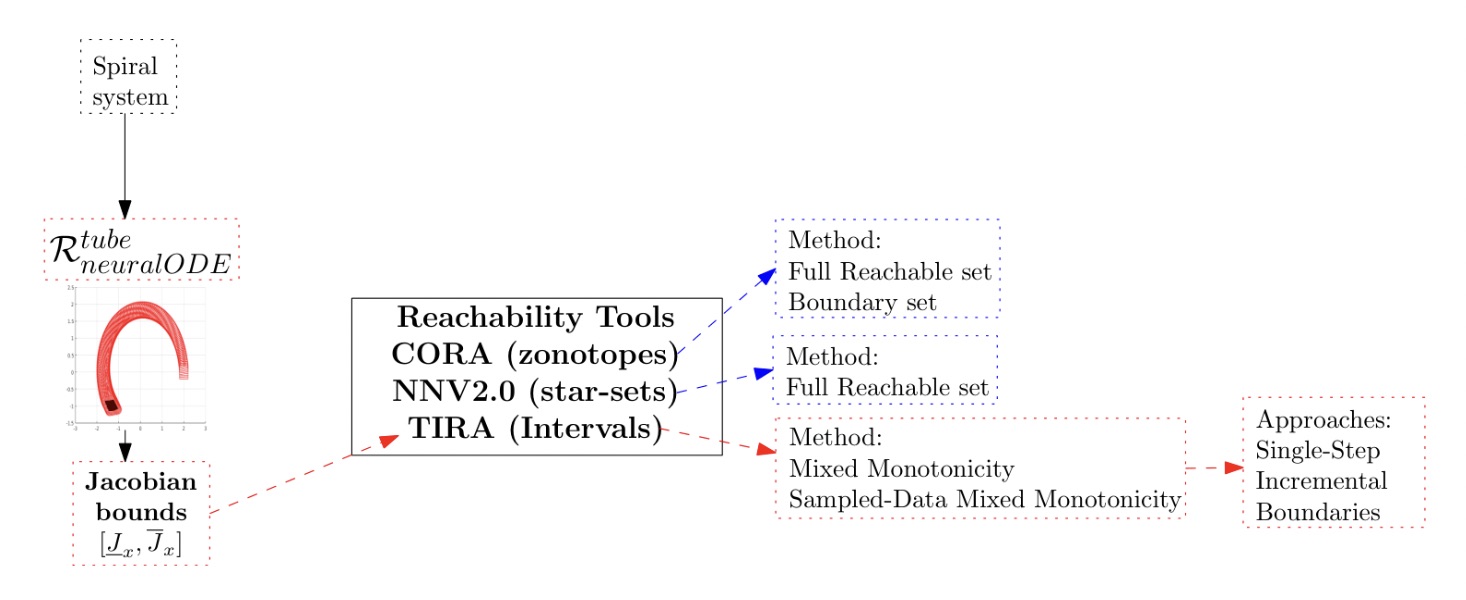

The current available tools that can handle neural ODE models are computationally expensive because they depend on set representations with three to five dimensions, maybe more. So in this research, which deals with introducing new reachability analysis for neural ODE methods, we proposed a neural ODE interval-based reachability analysis method based on extending the mixed monotonicity methods for continuous time dynamical systems. Such methods were originally introduced by my main PhD supervisor Pierre-Jean Meyer, but for continuous time dynamical systems. So, I extended this work for neural ODE and continuous time neural networks. And our methods turned out to be lightweight methods, which opens the door for real-world applications or real-world verification. We are still far away from that because we are still at the stage where we are providing offline guarantees before the operation of the system or the vehicle. But hopefully in the upcoming years we’ll be able to do this online.

Illustration of the steps for reachability analysis of neural ODE using different tools, methods and mixed monotonicity approaches

Illustration of the steps for reachability analysis of neural ODE using different tools, methods and mixed monotonicity approaches

So what has been the third, and most recent, research direction?

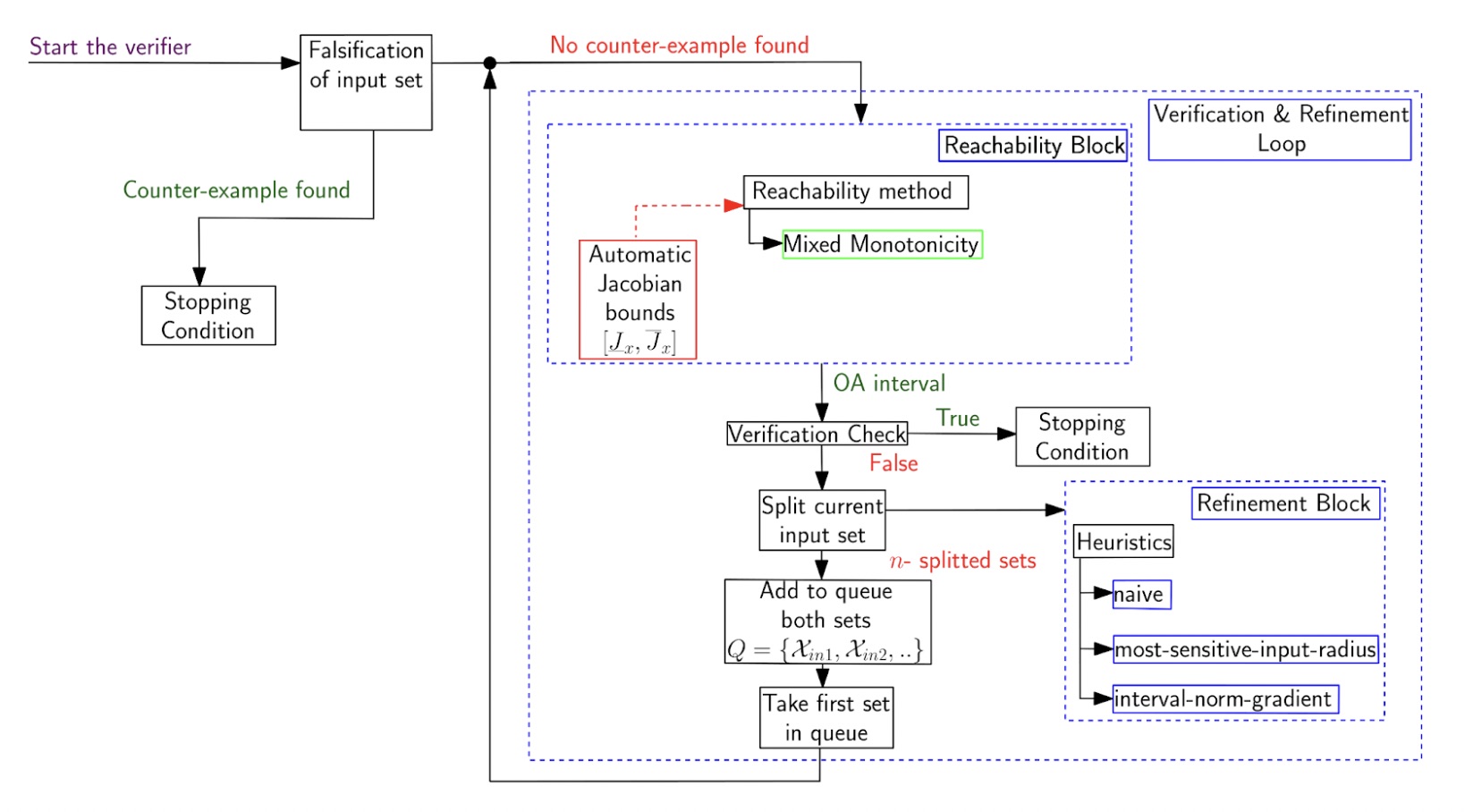

This third direction was about creating a full neural ODE verifier or verification toolbox. Here I extended the results from our method in the previous direction into a full neural ODE verifier to check specific safety properties on the neural ODE models. The general architecture of it is: first we do a falsification check for the input set in case we find a counterexample. If we find a counterexample, we conclude that this is going to violate our safety properties or system specification. However, if we don’t manage to find any counterexamples, we go to the verification and refinement loop. We try to split the input set into smaller subsets and verify each part of them and then take the union of them. If we don’t manage to find the safe reachable set of the system within a certain time frame, we conclude that we timed out. The system may be safe or may not be safe from those specific regions. The operator then knows which regions are safe and which regions may not be safe (due to uncertainty). These unsafe regions can then be avoided.

Neural ODE Verifier Architecture

Neural ODE Verifier Architecture

Could you tell us some more about the applications?

My lab is located in the north of France and specializes in guided railway vehicles and automatic metro lines. A fun fact is that Lille had the world’s first automatic metro line. So applications related to the metro and trains include real-time monitoring, detection and classification of hazards along the railway corridors, and which depends on AI models to classify specific regions of interest. We think that verifying these classification models using neural ODE could be interesting. I managed to find some railway corridor datasets that have labels for interesting stuff, both from images and from sensor collected data.

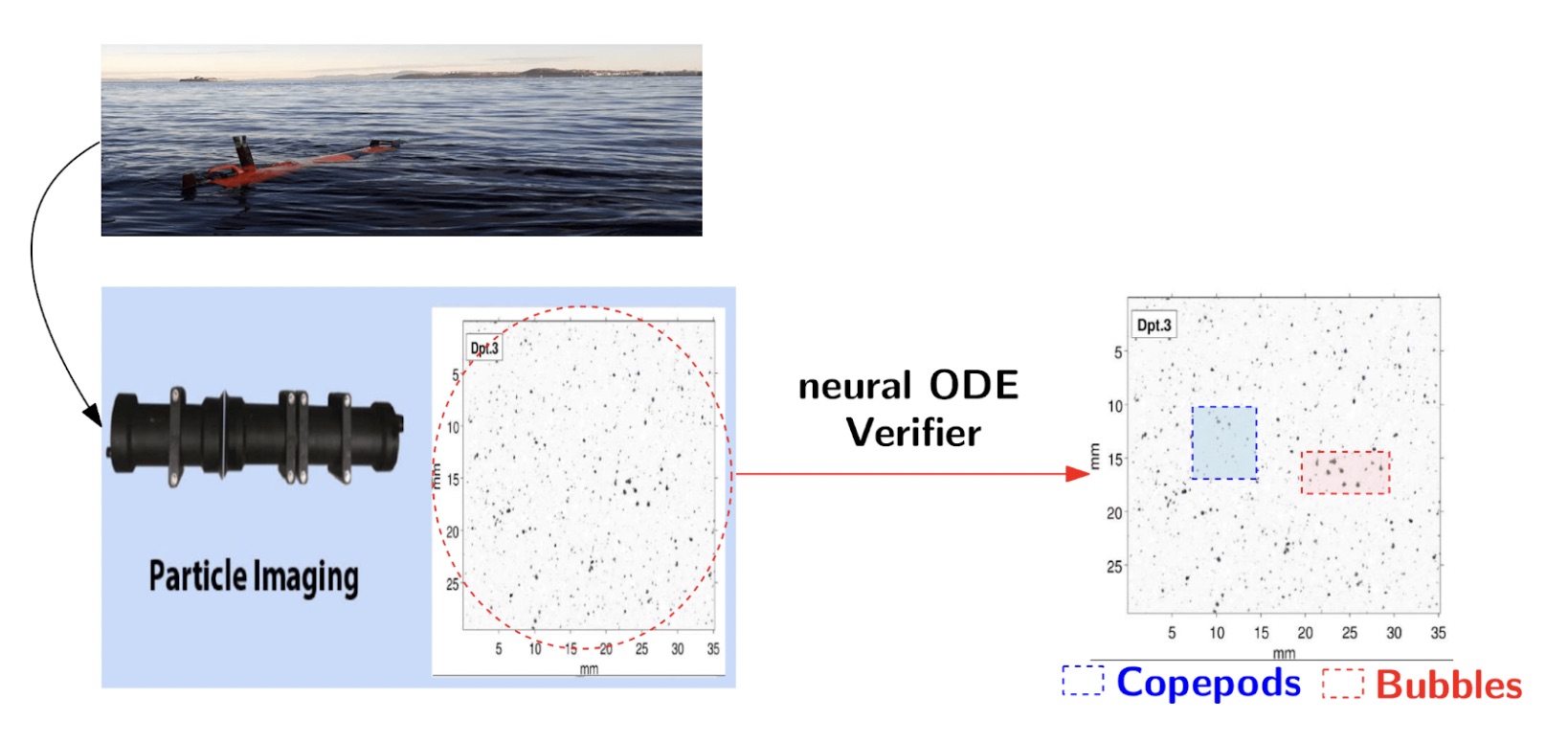

The other kind of applications are related to the marine environment. I’m part of a project in Norway, at the Department of Marine Technology, called SFI Harvest, which is monitoring underwater zooplankton and some microorganisms using autonomous underwater vehicles and a particle imager. So there were a couple of things they noticed appearing in their collected images from field campaigns over the years. Mainly they are interested in monitoring the migration patterns of copepods, which they actually harvest here in Norway. And they noticed a problem in the collected images from their campaigns in terms of the presence of bubbles and other tiny marine organisms which they are not interested in. They are currently using discrete CNN classifiers for feature extraction and class assignment of the organisms. So here my verifier will come into play to verify the robustness of their classifier or the robustness of the detection.

Robustness verification for ROI from collected in situ images

Robustness verification for ROI from collected in situ images

How did you find the doctoral consortium and the AAAI conference experience in general?

The doctoral consortium was a good experience and the other attendees had a range of different backgrounds which was interesting. I personally liked how they assigned each group of participants with a mentor. I was lucky, because I got a mentor who was doing AI verification as well. It was good to get some reflections on my PhD work.

In terms of conferences more generally, my personal preference is for small community gatherings – the AI verification community is a really small one. Last year I attended CAV (the computer aided verification conference) in Zagreb to present the work on my first research direction in the symposium of AI verification. CAV is the A* conference for formal methods / formal theory. When I went to AAAI, I met lots of the people that had been at that CAV conference, so it was nice meeting again. One common problem for us AI verification researchers is that the people from the AI community think that we are doing really theoretical or mathematical stuff, so we don’t feel we fit well there. And the formal methods people are usually verifying software programs or stuff like that. So our research kind of touches on both communities. There was actually one tutorial and lab forum at AAAI about the neural network verification competition. It was a gathering for people working in both domains which was nice.

Abdelrahman Sayed during AAAI/SIGAI Doctoral Consortium poster session

Abdelrahman Sayed during AAAI/SIGAI Doctoral Consortium poster session

What do you like doing when you’re not working on your PhD?

I like cycling a lot. However, in the north of France, it’s always raining, and then when I come to Norway it’s often snowing. When the weather is good, or during summer, I cycle a lot and I like to travel by bicycle between cities or explore different cities. In the summer of 2021, me and a friend toured most of the small cities in the north of Italy by bike. I also like hiking, especially in the mountains.

References

Sayed, A.S., Formal verification of neural ode for safety evaluation in autonomous vehicles, AAAI-26 Doctoral Consortium (2026).

Sayed, A.S., Meyer, P.J., Ghazel, M., Mixed monotonicity reachability analysis of neural ode: A trade-off between tightness and efficiency, NeurIPS 2025 Workshop on Symmetry and Geometry in Neural Representations (2025).

Sayed, A.S., Meyer, P.J., Ghazel, M., Bridging neural ode and resnet: A formal error bound for safety verification, International Symposium on AI Verification. pp. 97–114. Springer (2025).

About Abdelrahman

|

Abdelrahman Sayed Sayed is a 3rd-year PhD researcher at Université Gustave Eiffel, advised by Pierre-Jean Meyer, Mohamed Ghazel, Asgeir J. Sørensen. His PhD research focuses on developing tools/methods for formal verification of neural ODE. He is a recipient of the prestigious Marie Skłodowska-Curie PhD Fellowship through CLEAR-Doc project, as well as an Erasmus Mundus Joint Master’s degree scholarship in Marine and Maritime Intelligent Robotics. Abdelrahman’s work has led him to receive many awards and recognition, including the Best Student Presentation award at the Underwater Technology Conference 2023, and the second place at NTNU Faculty of Engineering Innovation Competition 2023 for his Master’s thesis. |

tags: AAAI, AAAI Doctoral Consortium, AAAI2026, ACM SIGAI