AIhub.org

Algorithm learns to correct 3D printing errors for different parts, materials and systems

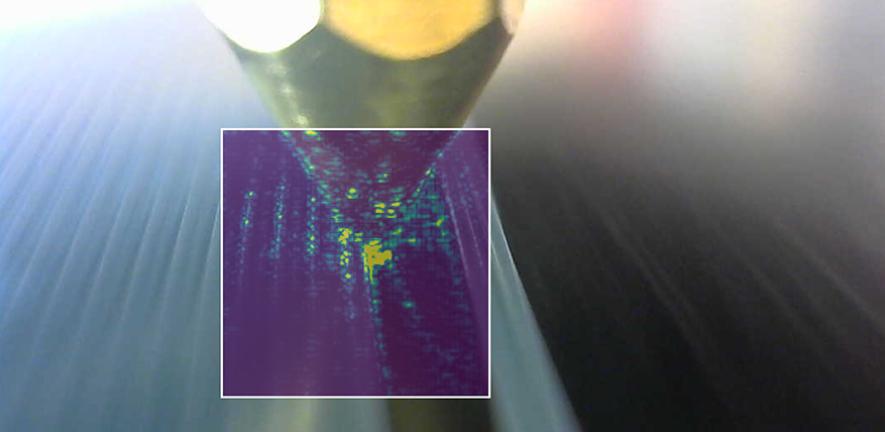

Example image of the 3D printer nozzle used by the machine learning algorithm to detect and correct errors in real time. Credit: Douglas Brion

Engineers from the University of Cambridge have developed a machine learning algorithm that can detect and correct a wide variety of errors in real time, and that can be easily added to new or existing machines to enhance their capabilities. 3D printers using the algorithm could also learn how to print new materials by themselves. Details of their low-cost approach are reported in the journal Nature Communications.

3D printing has the potential to revolutionise the production of complex and customised parts, such as aircraft components, personalised medical implants, or even intricate sweets, and could also transform manufacturing supply chains. However, it is also vulnerable to production errors, from small-scale inaccuracies and mechanical weaknesses through to total build failures.

Currently, the way to prevent or correct these errors is for a skilled worker to observe the process. The worker must recognise an error (a challenge even for the trained eye), stop the print, remove the part, and adjust settings for a new part. If a new material or printer is used, the process takes more time as the worker learns the new setup. Even then, errors may be missed as workers cannot continuously observe multiple printers at the same time, especially for long prints.

“3D printing is challenging because there’s a lot that can go wrong, and so quite often 3D prints will fail,” said Dr Sebastian Pattinson from Cambridge’s Department of Engineering, the paper’s senior author. “When that happens, all of the material and time and energy that you used is lost.”

Engineers have been developing automated monitoring for 3D printing, but existing systems can detect only a limited range of errors, and only for one part, one material and one printing system.

“What’s really needed is a ‘driverless car’ system for 3D printing,” said first author Douglas Brion, also from the Department of Engineering. “A driverless car would be useless if it only worked on one road or in one town – it needs to learn to generalise across different environments, cities, and even countries. Similarly, a ‘driverless’ printer must work for multiple parts, materials, and printing conditions.”

Brion and Pattinson say the algorithm they’ve developed could be the ‘driverless car’ engineers have been looking for.

“What this means is that you could have an algorithm that can look at all of the different printers that you’re operating, constantly monitoring and making changes as needed – basically doing what a human can’t do,” said Pattinson.

The researchers trained a deep learning computer vision model by showing it around 950,000 images captured automatically during the production of 192 printed objects. Each of the images was labelled with the printer’s settings, such as the speed and temperature of the printing nozzle and flow rate of the printing material. The model also received information about how far those settings were from good values, allowing the algorithm to learn how errors arise.
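The labelling idea described above can be illustrated with a minimal sketch. It is not the researchers' code: the setting names, the "good" values, the tolerances and the three-way low/good/high labels are all assumptions made for illustration, since the article does not give them.

```python
# Hypothetical "good" values and tolerances for three printer settings.
# The article names speed, nozzle temperature and flow rate, but gives
# no numbers; every value below is an assumption.
GOOD = {"flow_rate": 100.0, "speed": 100.0, "nozzle_temp": 210.0}
TOLERANCE = {"flow_rate": 10.0, "speed": 10.0, "nozzle_temp": 5.0}


def label_setting(name: str, value: float) -> str:
    """Label one setting by how far it sits from its assumed good value."""
    offset = value - GOOD[name]
    if offset < -TOLERANCE[name]:
        return "low"
    if offset > TOLERANCE[name]:
        return "high"
    return "good"


def label_image(settings: dict[str, float]) -> dict[str, str]:
    """Build one label per setting for an image captured at these settings."""
    return {name: label_setting(name, value) for name, value in settings.items()}


# An image captured while over-extruding with a slightly cool nozzle.
labels = label_image({"flow_rate": 130.0, "speed": 100.0, "nozzle_temp": 200.0})
print(labels)
```

Because the labels come from settings the printer already knows, images can be labelled automatically as they are captured, which is what makes a dataset of this size practical.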

“Once trained, the algorithm can figure out just by looking at an image which setting is correct and which is wrong – is a particular setting too high or too low, for example, and then apply the appropriate correction,” said Pattinson. “And the cool thing is that printers that use this approach could be continuously gathering data, so the algorithm could be continually improving as well.”
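The correction step Pattinson describes can be sketched as a simple feedback rule. This is an illustrative assumption rather than the published method: the function name, the step sizes and the low/good/high prediction format are hypothetical, and the model's predictions are supplied by hand instead of coming from a trained network.

```python
# Hypothetical step sizes for each correction; the article does not say
# how large the real adjustments are.
STEP = {"flow_rate": 5.0, "speed": 5.0, "nozzle_temp": 2.0}

# A setting judged "low" is nudged up, "high" is nudged down,
# and "good" is left alone.
DIRECTION = {"low": 1, "good": 0, "high": -1}


def apply_corrections(
    settings: dict[str, float], predictions: dict[str, str]
) -> dict[str, float]:
    """Return new settings, moving each one a step towards its good range."""
    return {
        name: value + DIRECTION[predictions[name]] * STEP[name]
        for name, value in settings.items()
    }


# Stand-in for what a trained model might predict from a single image.
current = {"flow_rate": 130.0, "speed": 100.0, "nozzle_temp": 200.0}
predicted = {"flow_rate": "high", "speed": "good", "nozzle_temp": "low"}

print(apply_corrections(current, predicted))
```

Run on every new image, a rule like this would keep nudging the settings until the model judges all of them to be good.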

Using this approach, Brion and Pattinson were able to make an algorithm that is generalisable – in other words, it can be applied to identify and correct errors in unfamiliar objects or materials, or even in new printing systems.

“When you’re printing with a nozzle, then no matter the material you’re using – polymers, concrete, ketchup, or whatever – you can get similar errors,” said Brion. “For example, if the nozzle is moving too fast, you often end up with blobs of material, or if you’re pushing out too much material, then the printed lines will overlap forming creases.

“Errors that arise from similar settings will have similar features, no matter what part is being printed or what material is being used. Because our algorithm learned general features shared across different materials, it could say ‘Oh, the printed lines are forming creases, therefore we are likely pushing out too much material’.”

As a result, the algorithm, which was trained using only one kind of material and printing system, was able to detect and correct errors in different materials, from engineering polymers to ketchup and mayonnaise, on a different kind of printing system.

In future, the trained algorithm could be more efficient and reliable than a human operator at spotting errors. This could be important for quality control in applications where component failure could have serious consequences.

“We’re turning our attention to how this might work in high-value industries such as the aerospace, energy, and automotive sectors, where 3D printing technologies are used to manufacture high-performance and expensive parts,” said Brion. “It might take days or weeks to complete a single component at a cost of thousands of pounds. An error that occurs at the start might not be detected until the part is completed and inspected. Our approach would spot the error in real time, significantly improving manufacturing productivity.”

The research was supported by the Engineering and Physical Sciences Research Council, Royal Society, Academy of Medical Sciences, and the Isaac Newton Trust.

The full dataset used to train the AI is freely available online.

Read the research in full

Generalisable 3D printing error detection and correction via multi-head neural networks

Douglas A. J. Brion & Sebastian W. Pattinson